From embeddings to evaluation: a practitioner’s guide to building RAG that actually works in production

When we started building AI-powered document Q&A into our products, we made the same mistake most teams make.

We picked a vector database. We embedded our documents. We wired up GPT-4. We called it RAG.

And for a while, it looked like it was working.

Then someone asked a question that spanned three sections of a report. The answer came back confidently wrong. Not hallucinated — the information was there, in the document. The system just couldn’t find the right pieces and stitch them together.

That’s when we realized the problem wasn’t our LLM. It wasn’t our vector database. It was everything that happened before retrieval — and everything we weren’t measuring after generation. Designing this layer correctly is critical for teams building scalable, production-ready systems through structured enterprise AI development services.

This is that story. And more importantly, this is what we learned.

The Problem With “Just Add RAG”

RAG — Retrieval-Augmented Generation — sounds straightforward: retrieve relevant documents, pass them to an LLM, get a grounded answer. No hallucinations, no stale training data.

In practice, it’s a pipeline with at least five places where things can silently break.

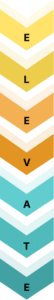

Here’s what a naive RAG system looks like:

User Query → Embed Query → Vector Search → Top-K Chunks → LLM → Response

Each arrow is a potential failure point. And most teams only optimize the last one — the LLM.

What LLMs Can and Can’t Do Alone

Before we talk about RAG, let’s be clear about why it exists.

Large Language Models are trained on static text snapshots. They’re remarkably good at reasoning, summarizing, and generating — but they have three fundamental limitations that matter in production:

Knowledge cutoff. The model knows nothing about what happened after its training data were collected. For enterprise applications dealing with internal documents, policy updates, or real-time data, this is a dealbreaker.

No private knowledge. The model was never trained on your company’s documents, your product specs, or your customer data. You can’t fix this with fine-tuning alone — fine-tuning teaches behavior, not facts.

Hallucination under uncertainty. When an LLM doesn’t know something, it often doesn’t say “I don’t know.” It generates a plausible-sounding answer. This is fine for creative writing. It’s catastrophic for document Q&A, compliance, or medical applications.

RAG addresses all three, but only if you build it correctly.

Why RAG? The Actual Value Proposition

The core idea: at inference time, retrieve relevant context from an external knowledge base and give it to the LLM as part of the prompt. The model then generates an answer grounded in what was retrieved — not what it vaguely remembers from training.

Without RAG: LLM answers from parametric memory → hallucination risk

With RAG: LLM answers from retrieved context → grounded, verifiable

This unlocks three things that matter for enterprise deployments:

Updatability without retraining. Update your knowledge base, and the system immediately knows the new information. No fine-tuning cycle, no redeployment.

Attribution. Every answer can be traced back to a source document. This is critical for compliance, legal, and regulated industries.

Cost efficiency. You don’t need the largest, most expensive model when the answer is literally in the context window. A smaller model with good retrieval can outperform a larger model with bad retrieval.

But here’s what the tutorials don’t tell you: the quality of your RAG system is almost entirely determined by what happens before the LLM sees the query. Retrieval quality is the dominant variable.

Where Naive RAG Fails

RAG solves the LLM’s knowledge problem. But naive RAG — embed, retrieve top-K, generate — introduces its own failure modes. And these failures are silent: the system returns an answer, it just happens to be wrong.

Understanding these failure modes matters because every advanced technique covered in this guide exists to address one or more of them.

| Failure Mode | What Happens | Example |
| --- | --- | --- |
| Vocabulary Mismatch | User phrasing doesn’t match document phrasing | User asks “salary” but the document says “compensation package” |
| Ambiguous Queries | Query is too vague or has multiple valid interpretations | “Tell me about Python”: the snake, or the programming language? |
| Lost in the Middle | LLM underweights relevant chunks positioned in the middle of a long context | Correct answer is chunk 3 of 5, and the model effectively ignores it |
| Irrelevant Top-K | Cosine similarity returns semantically close but contextually wrong chunks | Returns results about “ML model training” when the question is about “ML model deployment” |
| Chunking Artifacts | Answer split across chunk boundaries, retrieved in neither | The explanation starts in chunk 7 and concludes in chunk 8 |
| No Answer Available | System hallucinates rather than admitting it doesn’t know | Generates a confident answer from tangentially related chunks |

Each section that follows — hybrid retrieval, advanced chunking, HyDE, reranking, evaluation — is a direct response to one or more rows in this table. Keep this map in mind as you read.

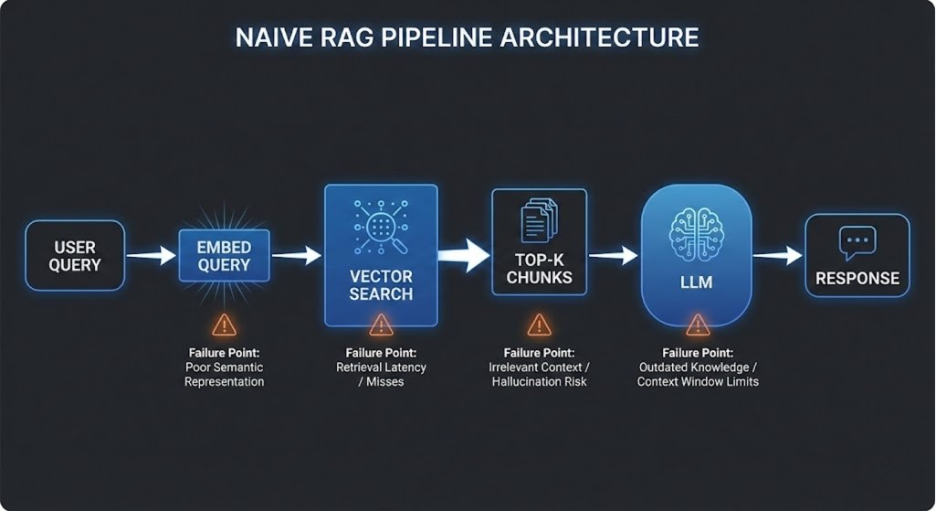

Hybrid RAG: Beyond Pure Vector Search

Most RAG tutorials show you one retrieval method: embed your query, find the nearest vectors, return the top-K results.

This is called dense retrieval — and it’s powerful but incomplete.

The Problem With Pure Vector Search

Dense retrieval is excellent at semantic similarity. Ask “what are the revenue figures for Q3?” and it finds chunks about financial performance even if they don’t contain that exact phrase.

But it fails on exact lookup. If you search for “Section 4.2.1” or “SKU-B4920” — a specific ID, a clause number, a product code — dense retrieval can miss it entirely. The embedding doesn’t capture character-level precision.

The Problem With Pure Keyword Search

BM25 and traditional keyword search (what Elasticsearch has used for decades) do the opposite. They’re extremely good at exact matches but fail on semantics. Search “how does the system handle payment failures?” and they might return nothing if the document says “transaction error recovery process” instead.

Hybrid = Best of Both

The solution is to run both in parallel and merge results. This is Hybrid RAG. In production systems, implementing hybrid retrieval effectively requires strong data engineering for AI systems, where indexing strategy, metadata design, and search orchestration directly impact retrieval accuracy.

Query

├── Dense Retrieval (embedding similarity) → candidates_A

└── Sparse Retrieval (BM25 keyword search) → candidates_B

↓

Reciprocal Rank Fusion (RRF)

↓

Unified Ranked Results

↓

(Optional) Reranker

↓

Final Top-K → LLM

Reciprocal Rank Fusion (RRF) is the standard method for merging ranked lists from different retrievers. It’s simple and surprisingly effective: each result gets a score of 1/(k + rank) from each retriever, where rank is the result’s position in that retriever’s list and k is a smoothing constant (60 is a common choice), and the scores are summed to produce a final ranking.
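To make the scoring concrete, here is a minimal sketch of RRF in plain Python. The function name `reciprocal_rank_fusion`, the document IDs, and the default `k=60` are illustrative assumptions rather than any particular library’s API; tune `k` against your own data.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse several ranked lists of document IDs into a single ranking."""
    scores = defaultdict(float)
    for ranking in ranked_lists:
        # Ranks are 1-based: the top result of each retriever has rank 1
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID so output is deterministic
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from the two retrievers
dense_results = ["doc_a", "doc_b", "doc_c"]
sparse_results = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([dense_results, sparse_results])
print(fused)
```

In this toy example `doc_a` comes out on top because both retrievers rank it highly, while `doc_d`, which only one retriever found at rank 3, lands last.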

The Third Layer: Reranking

After fusion, you still have 10-20 candidates. A reranker (cross-encoder) scores each retrieved chunk against the query — not just for embedding similarity, but for actual relevance.

Rerankers are slower than retrieval but more accurate. They process query+document pairs end-to-end, capturing nuance that bi-encoder embeddings miss. Models like Cohere Rerank, BGE Reranker, and Jina Reranker have become standard in production pipelines.

The flow: retrieve broadly → rerank precisely → send top 3-5 to LLM.

Embeddings: The Layer Everyone Rushes Past

Before you can retrieve anything, you need to represent your documents as vectors. The embedding model you choose matters more than most teams realize.

What Embeddings Actually Are

An embedding model converts text into a high-dimensional vector (typically 768 to 3072 dimensions). Semantically similar text produces geometrically close vectors. This is what enables semantic search.
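“Geometrically close” is usually measured with cosine similarity. The sketch below uses hand-written three-dimensional toy vectors, which are an assumption for illustration only; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors (hypothetical values, not produced by a real model)
salary = [0.9, 0.1, 0.0]
compensation = [0.8, 0.2, 0.1]
python_snake = [0.0, 0.1, 0.9]

related = cosine_similarity(salary, compensation)
unrelated = cosine_similarity(salary, python_snake)
print(f"salary vs compensation: {related:.3f}")
print(f"salary vs python (snake): {unrelated:.3f}")
```

The related pair scores close to 1, the unrelated pair close to 0. A vector store runs this kind of comparison (or an approximation of it) between the query vector and every indexed chunk.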

Choosing an Embedding Model

Not all embedding models are created equal. Choosing the wrong one — especially for domain-specific content — can silently degrade your entire RAG system.

| Model | Dimensions | Best For |
| --- | --- | --- |
| text-embedding-3-small (OpenAI) | 1536 | General purpose, fast |
| text-embedding-3-large (OpenAI) | 3072 | Higher accuracy, more cost |
| BGE-M3 (BAAI) | 1024 | Multilingual, hybrid retrieval built-in |
| E5-Large-v2 | 1024 | Strong on MTEB benchmarks |
| Nomic Embed | 768 | Open source, self-hostable |
| Jina Embeddings v3 | 1024 | Long documents, late chunking support |

For specialized domains — legal, medical, code — domain-specific or fine-tuned embeddings consistently outperform general-purpose models.

One Critical Thing Most Guides Skip: Embedding Dimensions Matter

Larger embedding dimensions capture more nuance — but they’re also more expensive to store, index, and query. For most applications, text-embedding-3-small at 1536 dimensions is the right starting point. Upgrade only when benchmarks show clear improvement.

Late Chunking: A Better Way to Embed Long Documents

Traditional embedding: split the document into chunks, embed each chunk independently.

The problem: each chunk loses awareness of the document it came from. A chunk saying “the figure improved by 34%” no longer says which figure improved, or in what context.

Late Chunking (introduced by Jina AI) inverts this: embed the full document first using long-context models, then split the resulting token-level embeddings into chunks. Each chunk retains the global context of the document it belongs to.

The result: significantly better retrieval on long, structured documents where cross-section relationships matter.
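A rough sketch of the pooling step, assuming you already have one contextualized vector per token from a long-context model run over the whole document. The names `mean_pool` and `late_chunk`, the toy vectors, and the choice of mean pooling are assumptions for illustration; check your embedding model’s documentation for the pooling it expects.

```python
def mean_pool(vectors):
    """Average a list of equal-length vectors, dimension by dimension."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def late_chunk(token_embeddings, spans):
    """Pool document-level token embeddings into one vector per chunk.

    token_embeddings: one vector per token, computed over the FULL document,
                      so each vector already reflects surrounding context.
    spans: (start, end) token offsets for each chunk, end exclusive.
    """
    return [mean_pool(token_embeddings[start:end]) for start, end in spans]


# Toy token embeddings for a 4-token "document" (hypothetical values)
token_embeddings = [[1.0, 0.0], [3.0, 0.0], [0.0, 2.0], [0.0, 4.0]]
chunk_vectors = late_chunk(token_embeddings, spans=[(0, 2), (2, 4)])
print(chunk_vectors)
```

The difference from traditional chunking is only the order of operations: the model sees the whole document before the chunk boundaries are applied, so the chunking happens “late”.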

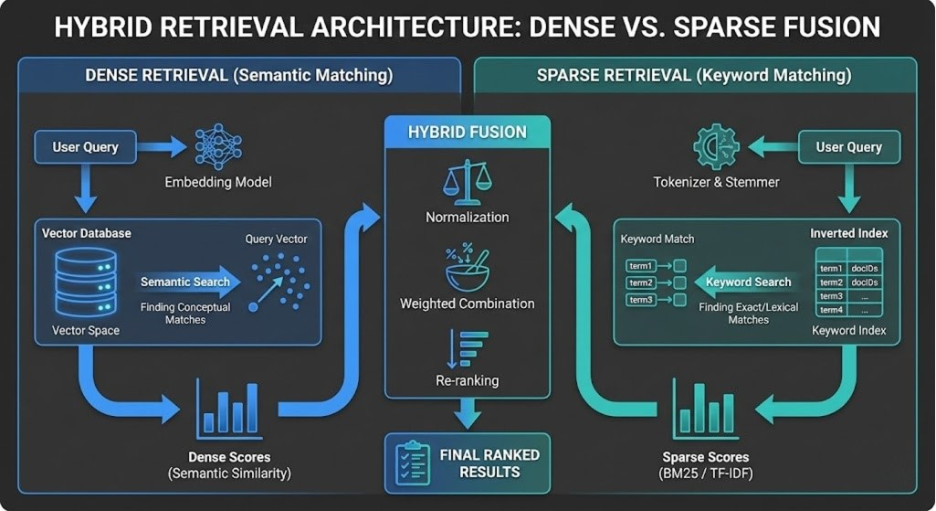

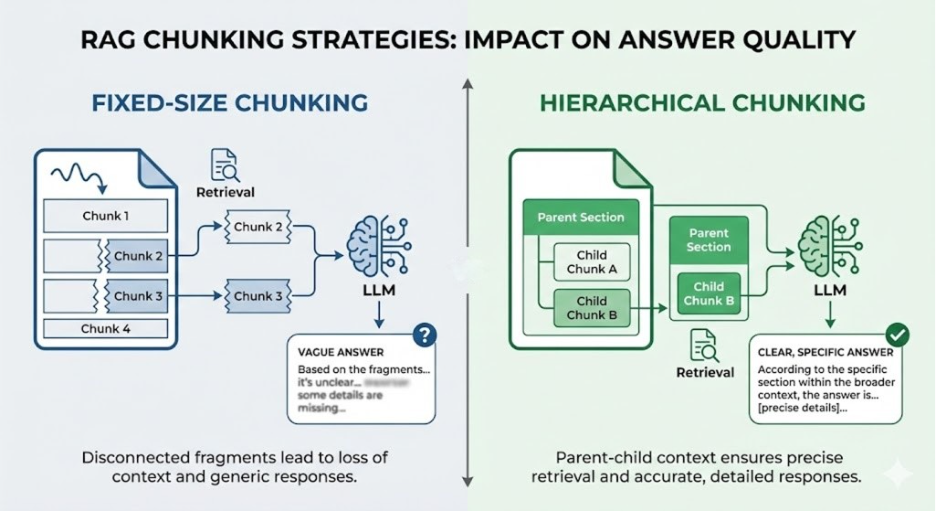

Chunking: The Most Underrated Decision in RAG

Here’s the finding that should change how you think about RAG:

Chunking strategy matters more than your choice of vector database.

NVIDIA’s 2024 benchmarks across five document datasets found up to a 9% recall gap between the best and worst chunking strategies, with no other variable changed. A well-chunked corpus retrieves accurately on a basic vector DB. A poorly-chunked corpus fails even with the most sophisticated retrieval stack.

Yet most teams spend weeks evaluating vector databases and minutes thinking about chunking.

Why Chunking Is Hard

You’re trying to solve a fundamental tension:

- Chunks too large → embeddings average multiple topics → noisy retrieval → the system retrieves the right page but not the right answer

- Chunks too small → each chunk loses context → the answer exists but can’t be understood in isolation

The goal: chunks small enough to retrieve precisely, but complete enough to give the LLM sufficient context for generation.

Strategy 1: Fixed-Size Chunking

The simplest approach. Split every N tokens, with some overlap to avoid context loss at boundaries.

chunk_size = 512 tokens

chunk_overlap = 50 tokens

Easy to implement. Fast. The baseline every team starts with.

The problem: it’s document-agnostic. A sentence that starts at token 500 gets split at token 512, mid-thought. The next chunk has no idea what the previous one was discussing.
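A minimal sketch of fixed-size chunking with overlap. The function name `fixed_size_chunks` is hypothetical, and the “tokens” here are just placeholder strings; in a real pipeline they would come from your model’s tokenizer.

```python
def fixed_size_chunks(tokens, chunk_size=512, chunk_overlap=50):
    """Split a token list into windows of chunk_size that share chunk_overlap tokens."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        # Stop once a window has reached the end of the document
        if start + chunk_size >= len(tokens):
            break
    return chunks


# Placeholder tokens standing in for a tokenized 1,200-token document
tokens = [f"t{i}" for i in range(1200)]
chunks = fixed_size_chunks(tokens)
print([len(chunk) for chunk in chunks])
```

Each chunk starts 462 tokens after the previous one, so the last 50 tokens of one chunk are repeated as the first 50 of the next. Note that nothing in this code looks at sentence or section boundaries, which is exactly the weakness described above.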

Use when: Homogeneous, unstructured text. FAQs. Simple corpora where you need something running quickly.

Don’t use when: Documents have natural structure — sections, headings, slides, tables.

Strategy 2: Recursive Character Text Splitting

The LangChain default. Instead of splitting blindly at N tokens, it tries to split at natural boundaries (paragraph breaks first, then line breaks, then spaces between words), falling back to character-level splits only when necessary.

In Chroma’s benchmarks, RecursiveCharacterTextSplitter at 400-512 tokens delivered 85-90% recall without the computational overhead of semantic methods. It’s a strong default for most teams.
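To show the idea behind recursive splitting, here is a simplified sketch. It is not LangChain’s implementation: it measures length in characters rather than tokens, adds no overlap, and the name `recursive_split` and the `max_chars` value are assumptions for illustration.

```python
def recursive_split(text, max_chars=200, separators=("\n\n", "\n", " ", "")):
    """Split at the coarsest separator that keeps every piece within max_chars."""
    if len(text) <= max_chars:
        return [text] if text.strip() else []
    if not separators or separators[0] == "":
        # Last resort: hard split at character level
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    sep, finer_separators = separators[0], separators[1:]
    chunks, current = [], ""
    for piece in text.split(sep):
        candidate = piece if not current else current + sep + piece
        if len(candidate) <= max_chars:
            current = candidate  # keep packing pieces into the current chunk
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(piece) <= max_chars:
            current = piece
        else:
            # This piece is still too long: retry with the next, finer separator
            chunks.extend(recursive_split(piece, max_chars, finer_separators))
    if current:
        chunks.append(current)
    return chunks


long_paragraph = " ".join(["word"] * 100)
document = "Short intro paragraph.\n\n" + long_paragraph
pieces = recursive_split(document, max_chars=120)
print(len(pieces), [len(piece) for piece in pieces])
```

The short paragraph survives as its own chunk, and only the oversized paragraph is broken further, at word boundaries rather than mid-word.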

Use when: Prose-heavy documents, articles, reports, general text corpora.

Strategy 3: Semantic Chunking

Instead of counting tokens, semantic chunking analyzes the embedding similarity between consecutive sentences. When similarity drops significantly — meaning the topic is shifting — it creates a new chunk boundary.

The result: chunks that respect topic boundaries, not arbitrary token counts. Each chunk contains one coherent idea.

The cost: requires embedding every sentence during preprocessing, which is computationally expensive. And it introduces a threshold parameter (how much similarity drop triggers a split?) that requires tuning per document type.
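The boundary rule can be sketched in a few lines. To keep the example self-contained, a bag-of-words count stands in for a real embedding model; `bow_embed`, `semantic_chunks`, and the `threshold` value of 0.1 are all assumptions for illustration, and a real threshold has to be tuned on your own documents.

```python
import math
from collections import Counter


def bow_embed(sentence):
    """Stand-in 'embedding': lowercase word counts (a real system would call a model)."""
    return Counter(word.strip(".,").lower() for word in sentence.split())


def cosine(a, b):
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_chunks(sentences, threshold=0.1, embed=bow_embed):
    """Start a new chunk whenever similarity to the previous sentence drops below threshold."""
    if not sentences:
        return []
    groups = [[sentences[0]]]
    previous = embed(sentences[0])
    for sentence in sentences[1:]:
        current = embed(sentence)
        if cosine(previous, current) < threshold:
            groups.append([sentence])  # topic shift: open a new chunk
        else:
            groups[-1].append(sentence)
        previous = current
    return [" ".join(group) for group in groups]


sentences = [
    "Revenue grew in the third quarter.",
    "Revenue growth came from enterprise subscriptions.",
    "Interview candidates should prepare STAR answers.",
    "STAR answers describe situation, task, action, result.",
]
topic_chunks = semantic_chunks(sentences)
print(topic_chunks)
```

The two revenue sentences end up in one chunk and the two interview sentences in another, because similarity drops to zero between sentence two and sentence three.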

Benchmarks show up to 9% improvement in recall over fixed-size chunking for well-structured documents.

Use when: Academic papers, long-form reports, content where topic coherence within chunks matters.

Strategy 4: Hierarchical Chunking

This is the approach that changes everything for structured documents.

The idea: documents have a natural hierarchy. A research paper has sections → subsections → paragraphs. A presentation has sections → slides → bullets. A legal document has articles → clauses → provisions.

Hierarchical chunking preserves this structure explicitly:

Parent Node: Full Section (context for generation)

Child Nodes: Individual Paragraphs (precision for retrieval)

At query time:

1. Retrieve child chunk (precise match)

2. Return parent chunk to LLM (full context)

This is sometimes called Parent Document Retrieval — retrieve small, generate with large.

The result: you get the retrieval precision of small chunks and the contextual completeness of large chunks. You don’t have to choose.
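A minimal sketch of the parent-child lookup. The data layout, the names `ChildChunk` and `retrieve_with_parent`, and the word-overlap scorer are assumptions for illustration; in a real system the scorer would be your vector or hybrid search.

```python
from dataclasses import dataclass


@dataclass
class ChildChunk:
    text: str
    parent_id: str  # link back to the larger parent section


def word_overlap(query, text):
    """Toy relevance score: number of shared lowercase words."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve_with_parent(query, children, parents, score=word_overlap):
    """Match on the small child chunk, then return its parent for generation."""
    best_child = max(children, key=lambda child: score(query, child.text))
    return best_child, parents[best_child.parent_id]


# Hypothetical index: parents keyed by ID, children pointing at them
parents = {
    "slide-7": "Section: Placement Preparation > Slide: Interview Skills. "
               "Maintain eye contact. Structure answers using the STAR method.",
    "slide-12": "Section: Coursework > Slide: Deadlines. Submit assignments on time.",
}
children = [
    ChildChunk("Use the STAR method to answer behavioral interview questions.", "slide-7"),
    ChildChunk("Maintain eye contact with the interviewer.", "slide-7"),
    ChildChunk("Submit assignments before the deadline.", "slide-12"),
]

child, parent_context = retrieve_with_parent(
    "What technique should I use to answer behavioral interview questions?",
    children,
    parents,
)
print(child.text)
print(parent_context)
```

Only the child chunks are searched; the parent text is looked up by ID afterwards and is what the LLM actually sees.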

Use when: Presentations, reports with sections, legal documents, technical documentation, anything with meaningful structure. We cover this in depth in the case study below.

Strategy 5: Page-Level Chunking

In NVIDIA’s 2024 benchmark, this won — 0.648 accuracy with the lowest variance across document types.

The reason: PDFs organize information by page deliberately. Financial reports put balance sheets on one page. Research papers put figures with their captions on the same page. Splitting across page boundaries destroys these relationships.

Use when: PDF-heavy corpora, financial reports, regulatory filings, academic papers.

Don’t use when: Your PDFs are just text exports with arbitrary page breaks — the page boundary carries no semantic meaning.

Strategy 6: Proposition-Based Chunking

Instead of splitting on structure or semantics, an LLM extracts atomic factual claims — “propositions” — from each passage. Each proposition becomes a chunk.

Original: “Revenue grew 34% YoY, driven primarily by enterprise subscriptions

and geographic expansion into Southeast Asia.”

Propositions:

→ “Revenue grew 34% year-over-year.”

→ “Revenue growth was driven by enterprise subscriptions.”

→ “Revenue growth was driven by geographic expansion into Southeast Asia.”

The result: extremely high retrieval precision. Each chunk is a single, self-contained fact — no noise, no mixed topics.

The cost: expensive. Requires LLM calls during preprocessing. Not practical for large corpora at scale without careful budgeting.

Use when: High-stakes, precision-critical applications. Legal Q&A, compliance checking, medical decision support. When incorrect retrieval has real consequences.

Chunking Strategy Selection Guide

| Document Type | Recommended Strategy | Why |
| --- | --- | --- |
| PDFs (structured) | Page-level | Respects visual organization |
| Research papers | Semantic + Hierarchical | Topic coherence + section context |
| Presentations / Slides | Hierarchical | Structure is the meaning |
| Legal documents | Proposition-based | Precision is non-negotiable |
| FAQs / Chat logs | Sentence-level | Self-contained questions |
| Code documentation | Recursive (language-aware) | AST-aware boundaries |
| Mixed / Unstructured | Contextual Retrieval | Adds context regardless of structure |

Two More Methods Worth Knowing

Contextual Retrieval (Anthropic, 2024): Before embedding, prepend a generated context summary to each chunk — e.g., “This chunk is from Section 3 of the Q3 earnings report, discussing operating margins.” This anchors the chunk in its document context without changing the chunk boundaries. Particularly effective when combined with BM25 hybrid search.

Cluster-Based / Agentic Chunking: Uses optimization algorithms (or LLM agents) to find chunk boundaries that maximize a “semantic reward” — coherence within chunks, dissimilarity across chunks. Computationally expensive but produces the best boundaries for complex documents.

Retrieval Strategies: Beyond Top-K

Once your corpus is chunked and indexed, how you actually retrieve matters.

Naive Top-K

Return the K most similar chunks to the query embedding. Simple. Works for simple queries.

Fails when: the question requires synthesizing information from multiple sections, or when the K most similar chunks are all slight variations of the same passage (redundant retrieval).

MMR — Maximal Marginal Relevance

Returns diverse results rather than the K most similar. After selecting the top result, each subsequent result is chosen to maximize relevance and minimize overlap with already-selected chunks.

The result: a retrieval set that covers different aspects of the answer, not five versions of the same paragraph.
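A minimal sketch of the greedy MMR selection loop. The two-dimensional toy vectors and the `lambda_mult=0.5` balance between relevance and diversity are assumptions for illustration; the function name `mmr` is hypothetical.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def mmr(query_vec, doc_vecs, k=3, lambda_mult=0.5):
    """Greedily pick k document indices, trading relevance against redundancy."""
    selected = []
    candidates = list(range(len(doc_vecs)))

    def mmr_score(i):
        relevance = cosine(query_vec, doc_vecs[i])
        # Redundancy: similarity to the closest already-selected document
        redundancy = max((cosine(doc_vecs[i], doc_vecs[j]) for j in selected), default=0.0)
        return lambda_mult * relevance - (1 - lambda_mult) * redundancy

    while candidates and len(selected) < k:
        best = max(candidates, key=mmr_score)
        selected.append(best)
        candidates.remove(best)
    return selected


query = [1.0, 0.0]
docs = [
    [1.0, 0.10],   # index 0: highly relevant
    [1.0, 0.12],   # index 1: near-duplicate of index 0
    [0.8, -0.60],  # index 2: relevant, but covers a different direction
]
picked = mmr(query, docs, k=2)
print(picked)
```

Plain top-2 by similarity would return indices 0 and 1, two nearly identical chunks. MMR keeps index 0 and then prefers index 2, because the near-duplicate is penalized for overlapping with what was already selected.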

Use when: Summarization tasks, broad questions, anytime redundancy is a concern.

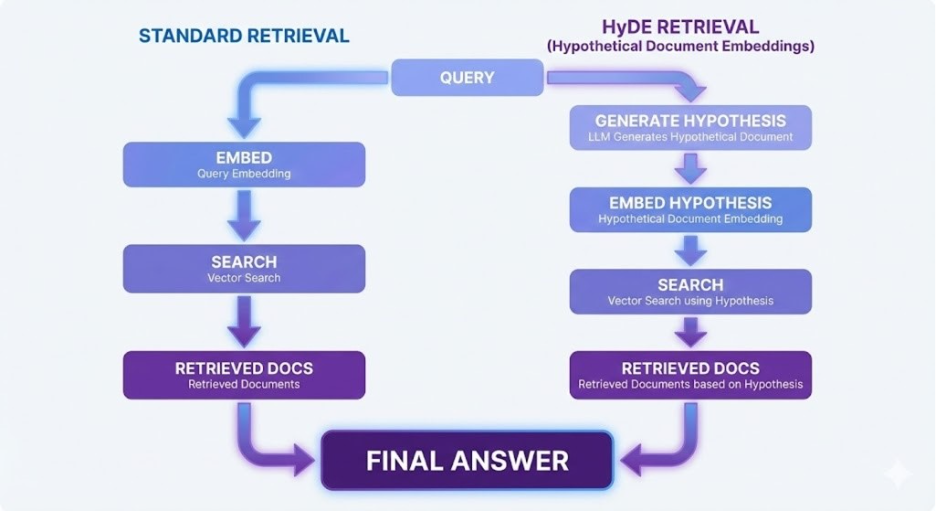

HyDE — Hypothetical Document Embeddings

One of the most underrated techniques in advanced RAG.

The problem: there’s often a distribution gap between queries and documents. A user asks a question. Documents contain answers. Questions and answers are phrased very differently — and this mismatch degrades embedding similarity.

HyDE closes this gap:

1. Take the user’s query

2. Generate a hypothetical answer using the LLM (no retrieval yet)

3. Embed the hypothetical answer

4. Use that embedding to search the vector store

5. Retrieve real chunks closest to the hypothetical answer

6. Pass retrieved chunks + original query to LLM for final generation

The hypothetical answer is never shown to the user. It’s just a better search vector.
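The six steps can be sketched as one function that takes the LLM, the embedding model, and the vector search as plain callables. Everything named here (`hyde_answer`, the prompt wording, and the `fake_*` stand-ins that let the sketch run without external services) is an assumption for illustration.

```python
def hyde_answer(query, generate, embed, search, top_k=5):
    """HyDE: search with the embedding of a hypothetical answer, not of the query."""
    # Steps 1-2: draft a hypothetical answer with no retrieval
    hypothetical = generate(f"Write a short passage that answers the question: {query}")
    # Step 3: embed the hypothetical answer
    search_vector = embed(hypothetical)
    # Steps 4-5: retrieve the real chunks closest to it
    chunks = search(search_vector, top_k)
    # Step 6: final generation uses the real chunks plus the ORIGINAL query
    context = "\n\n".join(chunks)
    return generate(f"Answer using only this context:\n{context}\n\nQuestion: {query}")


# Stand-in callables so the sketch runs on its own
prompts_seen = []


def fake_generate(prompt):
    prompts_seen.append(prompt)
    # First call drafts the hypothetical answer, second call writes the final one
    return "Use the STAR method." if len(prompts_seen) == 1 else "FINAL ANSWER"


def fake_embed(text):
    return [float(len(text))]


def fake_search(vector, top_k):
    return ["Structure answers using STAR method."][:top_k]


answer = hyde_answer(
    "How should I answer behavioral questions?", fake_generate, fake_embed, fake_search
)
print(answer)
```

Note the cost implication visible in the code: every query now triggers two LLM calls instead of one.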

In benchmarks, HyDE improves retrieval performance significantly on knowledge-intensive tasks, especially when queries are short and vague while documents are detailed.

Multi-Query Retrieval

Generate N semantically different paraphrases of the user’s query, retrieve chunks for each, deduplicate, and merge. Catches chunks that would be missed if the user’s specific phrasing didn’t match the document’s phrasing.
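The merge-and-deduplicate step is simple enough to sketch directly. Paraphrase generation (an LLM call) is left out; the function name `multi_query_retrieve`, the query strings, and the dictionary standing in for a retriever are assumptions for illustration.

```python
def multi_query_retrieve(queries, retrieve, top_k=5):
    """Retrieve for every query variant and merge results, keeping first-seen order."""
    seen = set()
    merged = []
    for query in queries:
        for chunk_id in retrieve(query, top_k):
            if chunk_id not in seen:  # drop chunks already returned by another variant
                seen.add(chunk_id)
                merged.append(chunk_id)
    return merged


# Hypothetical retriever: each paraphrase surfaces a slightly different set of chunks
fake_index = {
    "salary policy": ["c1", "c2"],
    "compensation package": ["c2", "c3"],
    "pay structure": ["c3", "c4"],
}
merged = multi_query_retrieve(list(fake_index), lambda query, k: fake_index[query][:k])
print(merged)
```

No single phrasing finds all four chunks here, but the union of the three does. In practice you would usually rerank or fuse the merged list rather than rely on first-seen order.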

Sub-Query Decomposition

Complex, multi-part questions are often too broad for a single retrieval pass. Sub-Query Decomposition breaks the original query into smaller, focused sub-queries, retrieves independently for each, then synthesizes the results before passing them to the LLM.

Original: “Compare the machine learning approaches used by Google and Meta

for content recommendation”

Sub-queries:

→ “Google machine learning content recommendation system”

→ “Meta machine learning recommendation approach”

→ “Comparison of recommendation systems in big tech”

Each sub-query targets a specific slice of the answer. The retrieved chunks are merged and deduplicated, giving the LLM complete, structured context to reason across — rather than forcing it to infer comparisons from a single ambiguous retrieval.

Use when: Questions that span multiple entities, time periods, or concepts. Comparative analysis, multi-hop reasoning, research queries.

Step-Back Prompting

For narrow, specific queries, retrieval often misses the broader context. Step-Back prompting first abstracts the query — “What are the principles behind X?” — retrieves on the abstracted query, then uses both abstract context and specific query for generation.

Useful when questions are highly specific but require background understanding.

Self-RAG

The model itself decides whether to retrieve and what to retrieve. It generates a response, evaluates whether it needs external information, retrieves if necessary, and iterates. More agentic. More expensive. Significantly better at multi-hop reasoning.

Evaluating RAG: What Actually Matters

Here’s where most teams are completely flying blind.

Teams deploy RAG, try a few queries manually, and call it good. They have no metrics. They don’t know if their system is getting better or worse as they iterate. Evaluation should be part of broader LLM infrastructure best practices, not something added after deployment.

Why BLEU and ROUGE Are Not Enough

BLEU and ROUGE measure n-gram overlap between a generated answer and a reference answer. They were designed for machine translation and summarization — where the output should look like the reference.

RAG has different requirements. A correct answer that uses different phrasing from the reference will score poorly on BLEU. An answer that sounds similar but uses wrong facts from the document will score well.

You need metrics that evaluate factual grounding, not surface similarity.

The Three Evaluation Layers

Layer 1: Retrieval Quality

→ Is the right information being found?

Layer 2: Generation Quality

→ Is the LLM using what was retrieved correctly?

Layer 3: End-to-End Quality

→ Does the final answer actually answer the question?

| Layer | Metric | What It Measures |
| --- | --- | --- |
| Retrieval | Precision@K | What fraction of retrieved chunks are actually relevant? |
| Retrieval | Recall@K | What fraction of relevant chunks were retrieved? |
| Retrieval | MRR | Is the first relevant chunk ranked high? |
| Retrieval | nDCG | Is the overall ranking quality good? |
| Generation | BERTScore | Semantic similarity (better than BLEU for RAG) |
| Generation | ROUGE | Token-level overlap with reference |
| End-to-end | Faithfulness | Does the answer stick to retrieved context? |
| End-to-end | Answer Relevancy | Does the answer address the question? |
| End-to-end | Context Precision | Was the retrieved context actually useful? |
| End-to-end | Context Recall | Did we retrieve all the context needed? |
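The four retrieval metrics are easy to compute yourself once you have labeled relevant chunks per query. The sketch below assumes binary relevance (a chunk is either relevant or not) and uses made-up chunk IDs; MRR is the mean of `reciprocal_rank` over all test queries.

```python
import math


def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved chunks that are relevant."""
    return sum(1 for chunk in retrieved[:k] if chunk in relevant) / k


def recall_at_k(retrieved, relevant, k):
    """Fraction of all relevant chunks that appear in the top k."""
    return sum(1 for chunk in retrieved[:k] if chunk in relevant) / len(relevant)


def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant chunk, or 0.0 if none was retrieved."""
    for rank, chunk in enumerate(retrieved, start=1):
        if chunk in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(retrieved, relevant, k):
    """Binary-relevance nDCG: discounted gain relative to an ideal ordering."""
    dcg = sum(
        1.0 / math.log2(rank + 1)
        for rank, chunk in enumerate(retrieved[:k], start=1)
        if chunk in relevant
    )
    ideal = sum(1.0 / math.log2(rank + 1) for rank in range(1, min(len(relevant), k) + 1))
    return dcg / ideal if ideal else 0.0


# One hypothetical test query: retrieved order vs. labeled relevant chunks
retrieved = ["c4", "c1", "c9", "c2", "c7"]
relevant = {"c1", "c2", "c3"}

print("Precision@5:", precision_at_k(retrieved, relevant, 5))
print("Recall@5:", round(recall_at_k(retrieved, relevant, 5), 3))
print("Reciprocal rank:", reciprocal_rank(retrieved, relevant))
print("nDCG@5:", round(ndcg_at_k(retrieved, relevant, 5), 3))
```

Two of the five retrieved chunks are relevant, one relevant chunk was missed entirely, and the first hit sits at rank 2. Each metric surfaces a different one of those facts.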

RAGAS: Automated RAG Evaluation

RAGAS (Retrieval Augmented Generation Assessment) is a widely used framework for automated RAG evaluation. Its key insight: you don’t need human-annotated ground truth for every metric. LLM-as-judge can evaluate faithfulness, relevance, and context quality at scale.

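    from ragas import evaluate
    from ragas.metrics import faithfulness, answer_relevancy, context_precision

    # test_dataset is assumed to be defined elsewhere: your evaluation set of
    # questions, generated answers, and the contexts retrieved for each.
    results = evaluate(
        dataset=test_dataset,
        metrics=[faithfulness, answer_relevancy, context_precision],
    )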

Faithfulness is the most critical metric. It measures whether every claim in the generated answer is grounded in the retrieved context. As a rule of thumb, a faithfulness score below 0.8 suggests the LLM is adding claims the context doesn’t support, even when retrieval is working.

Answer Relevancy measures whether the answer addresses the actual question. A system can be perfectly faithful (sticking to retrieved context) but still irrelevant if it retrieves the wrong content.

Context Precision tells you if your retrieval is returning noise alongside relevant chunks. High precision means everything retrieved is useful. Low precision means your reranking needs work.

Other evaluation tools: DeepEval (uses G-Eval with custom rubrics), TruLens (focuses on the “RAG triad” of groundedness, answer relevance, context relevance), Arize Phoenix (production monitoring and tracing).

Case Study: Why Hierarchical Chunking Is Essential for Presentation-Based AI

This isn’t theoretical. This is a problem we hit building AI-powered Q&A over university presentation content.

The Setup

Universities share large volumes of structured content with students — course presentations, placement preparation decks, syllabus documents. These presentations have a clear hierarchy:

Presentation

└── Section: “Placement Preparation”

└── Slide: “Interview Skills”

└── Bullet: “Maintain eye contact with the interviewer”

└── Bullet: “Structure answers using STAR method”

└── Sub-bullet: “Situation → Task → Action → Result”

A student asks: “What technique should I use to answer behavioral interview questions?”

The correct answer is the STAR method. It lives in a sub-bullet on slide 7 of a 45-slide presentation.

What Naive Chunking Does

With 512-token fixed-size chunking, the processor doesn’t know about slides, sections, or bullets. It splits the text mechanically.

The sub-bullet “Situation → Task → Action → Result” might end up in a chunk that looks like:

…communication skills important for success in technical roles.

Situation → Task → Action → Result. Practice with common behavioral

questions before your interview. Research the company thoroughly…

When retrieved, this chunk gets passed to the LLM. The LLM sees fragments from three different bullet points. It generates an answer that’s vague, inconsistent, or misattributed. The STAR method might be mentioned, but without the context that it’s specifically for behavioral questions and interview preparation.

What Hierarchical Chunking Does

With hierarchical indexing, the structure is preserved:

Parent Node (stored, not indexed for search):

“Section: Placement Preparation → Slide: Interview Skills — This slide

covers structured communication techniques for interview success,

including the STAR method for behavioral questions.”

Child Node (indexed for retrieval):

“Use the STAR method to answer behavioral interview questions:

Situation → Task → Action → Result.”

The query matches the child node precisely. The LLM receives the parent node as context, so it knows why STAR is being discussed, where in the curriculum it appears, and what it applies to.

The response is specific, contextually correct, and attributable.

The Numbers

Across our internal benchmark on 200 student queries over 15 university presentation decks:

| Chunking Strategy | Answer Accuracy | Faithfulness | Context Precision |
| --- | --- | --- | --- |
| Fixed-size (512 tokens) | 61% | 0.74 | 0.58 |
| Semantic chunking | 72% | 0.81 | 0.69 |
| Hierarchical (parent-child) | 89% | 0.91 | 0.84 |

The hierarchical approach didn’t just improve accuracy — it specifically improved the cases that matter most: questions that require understanding where a piece of information sits in the document, not just that it exists.

The Broader Principle

Any time your document’s structure carries meaning — presentations, legal contracts with numbered clauses, technical specs with sections and subsections, financial reports with organized statements — hierarchical chunking is not optional. It’s the only approach that respects what the document is actually saying.

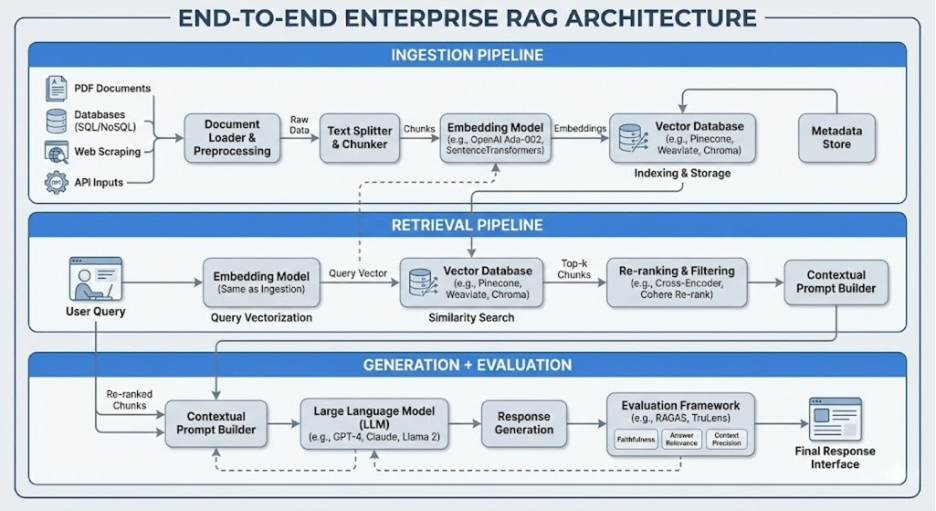

The Full Production RAG Stack

Here’s what a production-grade RAG system actually looks like, integrated end-to-end:

INGESTION PIPELINE

──────────────────

Raw Documents → Document Parser → Structure Detection

→ Chunking Strategy (per doc type) → Embedding Model

→ Vector Store + Keyword Index

RETRIEVAL PIPELINE

──────────────────

User Query → Query Analysis → [HyDE / Multi-Query]

→ Dense Retrieval + Sparse Retrieval → RRF Fusion

→ Reranker → Top-K Chunks

GENERATION PIPELINE

──────────────────

Top-K Chunks + Query → Context Assembly → LLM

→ Response → Citation Extraction

EVALUATION LAYER

────────────────

RAGAS: Faithfulness / Answer Relevancy / Context Precision

Retrieval: Precision@K / Recall@K / MRR

Production: Latency / Throughput / Cost per Query

Common RAG Anti-Patterns (And How to Avoid Them)

After building and debugging RAG in production, these are the mistakes we see most often:

Skipping retrieval evaluation. Teams measure end-to-end accuracy but never separately measure whether retrieval is working. A system can fail because of bad chunking, bad embeddings, bad retrieval, or bad generation — and without layer-specific metrics, you’re debugging blindly.

One chunking strategy for all document types. A single 512-token splitter applied to PDFs, slides, legal docs, and code. Each document type has different structure. The right chunking strategy depends on what the document is, not just how long it is.

No overlap in fixed-size chunks. Information split at the boundary of two chunks exists in full in neither. An overlap of at least 10-15% reduces this risk. Hierarchical chunking is the proper fix.

Embedding model mismatch. Using a general-purpose embedding model for highly specialized content (medical, legal, technical) without checking whether a domain-specific model performs better.

Top-K without reranking. Embedding similarity is a proxy for relevance, not relevance itself. A reranker as the second stage significantly improves final context quality, and the added latency stays modest when it only scores the 10-20 fused candidates.

Measuring faithfulness without measuring retrieval. An LLM that faithfully echoes retrieved content still gives wrong answers if retrieval returned the wrong chunks. Faithfulness and retrieval quality are independent — you need to measure both.

Choosing the Right Approach for Your Use Case

| Use Case | Chunking | Retrieval | Key Metric |
| --- | --- | --- | --- |
| Enterprise document Q&A | Hierarchical | Hybrid + Rerank | Faithfulness |
| Customer support | Sentence-level | Dense + MMR | Answer Relevancy |
| Legal / Compliance | Proposition-based | Dense + BM25 | Context Precision |
| Research assistance | Semantic | HyDE + Multi-Query | Recall@K |
| Presentation / Slide AI | Hierarchical (mandatory) | Hybrid + Rerank | Answer Accuracy |
| Code documentation | Recursive (AST-aware) | Dense | Exact Match |

What We’ve Learned

RAG is not a component you add — it’s a pipeline you architect. The decisions you make at ingestion time (how you chunk, how you embed) determine the ceiling of what’s possible at retrieval and generation time. For organizations building enterprise-grade AI solutions, this means treating Retrieval-Augmented Generation as a full-stack system — from ingestion and retrieval to evaluation and monitoring.

The teams that build RAG that works in production are not the ones who picked the best vector database. They’re the ones who spent time understanding their documents, matched chunking strategy to document structure, measured retrieval quality independently, and iterated based on data.

The LLM at the end of the pipeline is only as good as what you give it. Give it the right context, and it will give you the right answer.

How 47Billion Approaches RAG Engineering

At 47Billion, we’ve built RAG pipelines across industries — EdTech, HR Tech, enterprise knowledge management — each with different document types, different query patterns, and different accuracy requirements.

Our approach is systematic: we assess document structure before choosing chunking strategy, we instrument retrieval and generation separately, and we benchmark against domain-specific baselines before production deployment.

| Capability | What We Deliver |
| --- | --- |
| RAG Architecture Design | Chunking strategy, embedding selection, vector store setup |
| Hybrid Retrieval Implementation | Dense + sparse + reranking pipeline |
| Evaluation Framework | RAGAS integration, custom metrics, monitoring dashboards |
| Domain Adaptation | Fine-tuned embeddings, domain-specific chunking |
| Production Optimization | Latency tuning, cost optimization, scaling |

| Production Optimization | Latency tuning, cost optimization, scaling |

If your RAG system isn’t performing the way you expected — or you’re building one from scratch and want to get the architecture right from the start — let’s talk.

© 2025 47Billion. All rights reserved.