The Promise Was Simple. The Reality Was Not.

Every conference talk in 2025 had the same pitch: AI agents will automate everything. Give an LLM some tools, define a goal, and watch it work. The demos were impressive. Multi-agent systems writing code, analyzing data, generating reports—all without human intervention.

We believed the pitch. We had good reason to. At 7Seers, our AI-powered education platform, we were already running dozens of LLM-driven features across multiple microservices. Content generation, assessment creation, mock interviews. Adding agent capabilities felt like the natural next step.

So we went deep. Three frameworks. Multiple proof-of-concept projects. A production deployment for a global insurance company. Four months of building, testing, and learning.

What we discovered was both humbling and clarifying: the agent landscape in 2025 is simultaneously more capable and more fragile than the marketing suggests. The frameworks are real. The patterns are sound. But the gap between a compelling demo and a reliable production system is wider than anyone at those conferences was willing to admit.

This is what we learned.

Before We Begin: What Actually Is an AI Agent?

The term gets thrown around loosely, so let us be precise. An AI agent is software that can perceive its environment, reason about what to do, act to achieve a goal, and learn from feedback. The key difference from a chatbot is autonomy. A chatbot answers questions. An agent accomplishes tasks.

The academic classification includes five types, and understanding them helps frame what modern LLM agents actually do:

| Type | How It Works | Example |
| --- | --- | --- |
| Simple Reflex | If-then rules, no memory | Thermostat, email spam filter |
| Model-Based | Maintains internal state, tracks context | Navigation system remembering your location |
| Goal-Based | Plans actions to achieve specific objectives | GPS finding the fastest route |
| Utility-Based | Optimizes for the best outcome among options | Netflix recommendation engine |
| Learning | Improves performance over time from experience | Alexa getting better at understanding you |

Most modern LLM-based agents are a combination of goal-based, utility-based, and learning architectures. They plan, they optimize, and they get better with use. At least, that is the aspiration.

The ReAct Pattern: How Modern Agents Think

At the heart of every agent framework we tested is a pattern called ReAct—short for Reasoning and Acting. It is the loop that makes agents feel intelligent:

The agent receives a task. It thinks about what to do next (reasoning). It acts by calling a tool, an API, or performing a search. It observes the result. Then it thinks again. This loop continues until the goal is achieved or the agent decides it cannot proceed.

Here is what that looks like in practice for a mock interview agent:

- Thought: “User wants Python developer interview practice. I need role-specific questions.”

- Action: Call question generator with role=Python Developer, level=Mid

- Observation: Received 5 questions covering data structures, OOP, concurrency

- Thought: “Good questions. Present the first one and wait for the response.”

- Action: Present question, record user’s spoken answer

- Observation: User discussed list comprehensions but missed generator expressions

- Thought: “Evaluate against rubric. Score and provide constructive feedback.”

- Action: Call evaluation tool with answer and rubric

- Observation: Score 7/10. Feedback generated. Move to next question.

This loop is what separates agents from simple prompt-response systems. Every framework we worked with (AutoGen, CrewAI, LlamaIndex, and the OpenAI Agents SDK) implements some variant of ReAct. Understanding this pattern is essential for debugging agents when they inevitably go wrong. We explore similar orchestration challenges in our guide on building enterprise-grade LLM infrastructure.
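To make the loop concrete, here is a minimal, framework-free sketch of the think, act, observe cycle. Everything in it is an assumption for illustration: the two tools are stubs, and `scripted_policy` stands in for the LLM call that would normally choose the next action.

```python
from typing import Callable

# Hypothetical tools; real ones would call services or APIs.
def generate_questions(role: str) -> str:
    return f"3 questions for {role}"

def evaluate_answer(answer: str) -> str:
    return "score=7/10"

TOOLS: dict[str, Callable[[str], str]] = {
    "generate_questions": generate_questions,
    "evaluate_answer": evaluate_answer,
}

def scripted_policy(history: list[str]) -> tuple[str, str, str]:
    """Stand-in for the LLM: returns (thought, action, argument)."""
    observations = [h for h in history if h.startswith("Observation:")]
    if len(observations) == 0:
        return ("Need role-specific questions.", "generate_questions", "Python Developer")
    if len(observations) == 1:
        return ("Evaluate the answer against the rubric.", "evaluate_answer", "list comprehensions")
    return ("Goal reached.", "finish", "")

def react_loop(task: str, policy, max_steps: int = 5) -> list[str]:
    """Run thought -> action -> observation until the policy finishes or steps run out."""
    history = [f"Task: {task}"]
    for _ in range(max_steps):
        thought, action, arg = policy(history)
        history.append(f"Thought: {thought}")
        if action == "finish":
            break
        history.append(f"Action: {action}({arg!r})")
        history.append(f"Observation: {TOOLS[action](arg)}")
    return history

trace = react_loop("Mock interview for a Python developer", scripted_policy)
```

The `max_steps` cap matters as much as the loop itself: without it, a policy that never returns `finish` would run indefinitely.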

The quality of an agent depends more on how well its components are integrated than on the intelligence of the underlying LLM. A mediocre model with excellent tooling outperforms a brilliant model with poor orchestration.

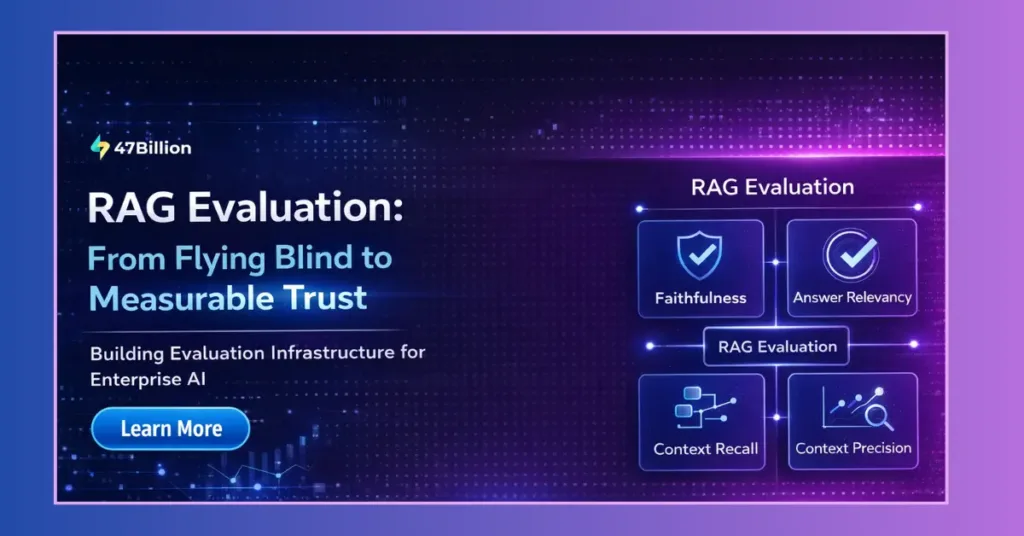

The Autonomy Spectrum: From Prompt Chains to Multi-Agent Systems

One of our first realizations was that “AI agents” is not a binary category. There is a spectrum of autonomy, and knowing where your use case falls determines which framework—and how much complexity—you actually need.

Level 1: Prompt Chaining

Linear flow. Input goes to an LLM, the output feeds into another LLM call, and so on. Deterministic, predictable, easy to debug. We use this for generating interview questions from job descriptions, then creating evaluation rubrics from those questions. It works. It is boring. And boring is good in production.
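A prompt chain of this kind can be sketched in a few lines. The `recording_llm` function below is a hypothetical stand-in for a real model call, and the prompt wording is illustrative only.

```python
from typing import Callable

LLM = Callable[[str], str]
calls: list[str] = []  # records prompts so the order of calls is visible

def recording_llm(prompt: str) -> str:
    """Stand-in for a model call; returns a tagged placeholder response."""
    calls.append(prompt)
    return f"<output {len(calls)}>"

def chain(job_description: str, llm: LLM) -> dict[str, str]:
    # Step 1: questions from the job description.
    questions = llm(f"Write interview questions for: {job_description}")
    # Step 2: the rubric is generated from step 1's output, not from the original input.
    rubric = llm(f"Write an evaluation rubric for: {questions}")
    return {"questions": questions, "rubric": rubric}

result = chain("Mid-level Python developer", recording_llm)
```

Because the flow is fixed, each step can be logged and tested in isolation, which is most of what makes this level easy to debug.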

Level 2: Workflows with Branching

Conditional logic based on LLM outputs. The system makes decisions, but all paths are predefined. Content generation with quality checks falls here—if the generated content scores below a threshold, it loops back for revision.
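The revision loop described above might look like the following sketch. The threshold, the revision cap, and both stub functions are assumptions; in a real system `generate` and `score` would each wrap a model call.

```python
def generate_with_review(generate, score, threshold: float = 0.8, max_revisions: int = 3) -> dict:
    """Regenerate until the quality score clears the threshold or revisions run out."""
    feedback = None
    draft = ""
    for attempt in range(1, max_revisions + 1):
        draft = generate(feedback)
        quality = score(draft)
        if quality >= threshold:
            return {"draft": draft, "attempts": attempt, "accepted": True}
        feedback = f"Previous draft scored {quality:.2f}; revise."
    # All paths are predefined: after the cap, hand back the last draft as rejected.
    return {"draft": draft, "attempts": max_revisions, "accepted": False}

def make_stub_generator():
    state = {"n": 0}
    def generate(feedback):
        state["n"] += 1
        return f"draft v{state['n']}"
    return generate

def stub_score(draft: str) -> float:
    # Pretend each revision improves quality by a fixed step.
    version = int(draft.rsplit("v", 1)[1])
    return 0.3 + 0.2 * version

outcome = generate_with_review(make_stub_generator(), stub_score)
```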

Level 3: Tool-Using Agents

The LLM decides which tools to call. This is where the ReAct pattern comes in. More autonomy, but still a single agent. A research agent that decides whether to search the web, query a database, or ask a clarifying question operates at this level.

Level 4: Multi-Agent Systems

Multiple specialized agents collaborating. One researches, another writes, a third reviews. This is the most complex, most powerful, and most unpredictable level. It is also where costs explode and debugging becomes genuinely difficult.

Our recommendation: for most production use cases today, Levels 2 and 3 are the sweet spot. Level 4 is fascinating for demos. It is painful for production.

For structured AI workflows, combining orchestration with strong data engineering foundations becomes critical.

Three Frameworks, Three Philosophies

We did not evaluate frameworks in a vacuum. We built the same systems with different frameworks to understand their real trade-offs. Here is what we found.

AutoGen: Power at a Price

AutoGen’s mental model is conversations. You define agents with specific personas, and they talk to each other to complete tasks. It feels natural when it works. It feels chaotic when it does not.

We built two projects with AutoGen. The first was a table reservation system—a single agent that checks availability, confirms preferences, and books tables. It took about three weeks to build. The second was FinRobot, a multi-agent system for generating annual financial reports, with agents specialized in data retrieval, summarization, and coordination.

What Worked:

- The conversation paradigm is genuinely intuitive for complex, exploratory tasks

- Adding new agents to existing workflows is straightforward

- Excellent support for code execution agents

- Human-in-the-loop is a first-class citizen with configurable input modes

What Did Not:

- Conversations go in circles. Agents keep talking without converging on a solution

- Token consumption is brutally high. A task that took about 1,000 tokens as a simple workflow consumed 5,000+ tokens in AutoGen, because every agent sees the full conversation history

- Debugging multi-agent conversations is a nightmare. When three agents disagree, tracing why requires reading pages of generated text

- Agents sometimes called tools repeatedly when once was sufficient

AutoGen is powerful for exploratory tasks where you want diverse perspectives. For deterministic workflows, it is overkill and expensive.

CrewAI: The Pragmatic Middle Ground

CrewAI thinks in tasks, not conversations. You define agents with roles and assign them specific tasks in sequence or hierarchy. It felt more structured, more predictable, and significantly faster to develop with.

We rebuilt the exact same table reservation system with CrewAI. Working version in one week, compared to three weeks with AutoGen. The difference was striking.

What Worked:

- Task-based approach feels production-ready from day one

- Better control over execution flow than AutoGen

- Built-in tool integration is smooth and well-documented

What Did Not:

- Less flexible than AutoGen for dynamic, open-ended scenarios

- Agent memory across tasks was tricky to manage

- Deep customization of agent behavior required workarounds

CrewAI sits between simple workflows and full multi-agent chaos. It is a good middle ground for structured, multi-step tasks. This structured orchestration approach aligns closely with modern AI-driven product development strategies.

LlamaIndex Workflows: The Document Specialist

LlamaIndex takes a fundamentally different approach. It excels at document-heavy, RAG-centric applications. We used it for two projects: a note summarization system and an insurance helper that needed to pull structured data from unstructured documents.

What Worked:

- Excellent for document processing and information retrieval

- Clean abstraction for defining workflow steps

- Event-driven architecture makes adding logging, retries, and error handling natural

- Tight integration with RAG infrastructure is its primary strength

What Did Not:

- Documentation for advanced use cases is sparse

- Debugging complex workflows required significant custom tooling

- Error handling needed extensive custom work

- Not the right choice for pure orchestration—it is designed around data retrieval

Use LlamaIndex when your agent’s primary job is finding, organizing, and synthesizing information from documents. For everything else, there are better options.

Side-by-Side Comparison

| Aspect | AutoGen | CrewAI | LlamaIndex |
| --- | --- | --- | --- |
| Best For | Exploratory multi-agent collaboration | Structured multi-step tasks | RAG-heavy document workflows |
| Learning Curve | Steep | Gentle | Medium |
| Production Readiness | Needs heavy guardrails | Good for structured workflows | Good for RAG use cases |
| Cost Efficiency | Poor (high token usage) | Medium | Good (focused workflows) |
| Debugging | Hard (multi-agent chaos) | Good (structured tasks) | Medium (event-driven helps) |

A note on framework similarities: while each has unique terminology and abstractions, they fundamentally solve similar orchestration problems. The real differentiator is the level of abstraction. Frameworks with fewer layers between you and the LLM offer lower latency and more control. Higher-level frameworks trade some of that for faster development. Choose based on where your use case sits on the autonomy spectrum.

Production Case Study: AI Sales Training for a Global Insurance Company

Our most significant learning came from a production deployment—an AI-powered sales training simulator built for a global insurance company. Two agents work together: one plays the role of a customer (a financial professional with configurable personality traits), while the other acts as a real-time coach for the sales trainee.

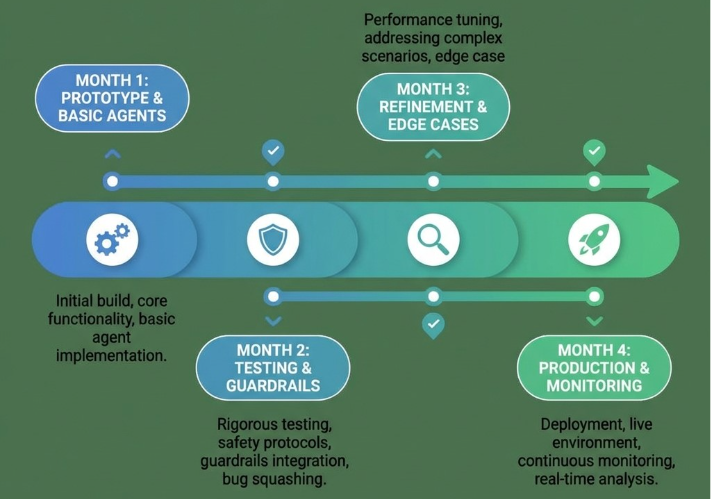

Users adjust settings like personality type, difficulty level, and conversation style. The system creates dynamic training scenarios that adapt to each session. Building this took four months from start to production-ready.

Six Hard Lessons from Production

1. Agents Need Brutally Clear Boundaries

In earlier prototypes, agents would call the same tool multiple times when once was enough. In one case, an agent attempted to use a function that seemed logical but did not actually exist. The fix was explicit constraints: maximum tool call counts, strict validation of available tools, and clear error messages when boundaries are hit.
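One way to express those constraints in code is a thin wrapper around the tool registry. This is an illustrative sketch, not the production implementation; the class name, the per-tool limit, and the example tool are all hypothetical.

```python
class ToolBoundaryError(Exception):
    """Raised when an agent steps outside its allowed tool usage."""

class GuardedTools:
    def __init__(self, tools: dict, max_calls_per_tool: int = 2):
        self._tools = dict(tools)
        self._max = max_calls_per_tool
        self._counts = {name: 0 for name in self._tools}

    def call(self, name: str, **kwargs):
        # Strict validation: a tool the model invented is rejected with a clear message.
        if name not in self._tools:
            raise ToolBoundaryError(
                f"Unknown tool {name!r}. Available tools: {sorted(self._tools)}"
            )
        # Call budget: stops the agent from repeating a call that already succeeded.
        if self._counts[name] >= self._max:
            raise ToolBoundaryError(f"Tool {name!r} exceeded {self._max} calls")
        self._counts[name] += 1
        return self._tools[name](**kwargs)
```

The error messages are written for the model as much as for the developer: feeding them back as an observation gives the agent a chance to correct course instead of retrying blindly.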

2. Long Conversations Break Things

Training sessions can run 30–45 minutes. That is a lot of context. The agent needed to track key information without getting overwhelmed by conversation length. We implemented smart summarization—keeping critical context while pruning redundant exchanges.
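A simple version of this idea keeps the most recent turns verbatim and replaces everything older with a summary. The sketch below assumes a `summarize` callable (in practice an LLM call) and an arbitrary window of four turns; neither reflects the actual production settings.

```python
def compact_history(turns: list[str], summarize, keep_recent: int = 4) -> list[str]:
    """Replace older turns with one summary line; keep the latest turns verbatim."""
    if len(turns) <= keep_recent:
        return list(turns)
    older, recent = turns[:-keep_recent], turns[-keep_recent:]
    return [f"Summary of earlier conversation: {summarize(older)}"] + recent

def stub_summarize(older: list[str]) -> str:
    # Stand-in for a model-generated summary.
    return f"{len(older)} earlier turns condensed"

turns = [f"turn {i}" for i in range(1, 11)]
compacted = compact_history(turns, stub_summarize)
```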

3. Unexpected Inputs Are the Norm

Sales trainees say things no test suite anticipates. The agent needed graceful handling of off-topic questions, emotional responses, and conversational tangents. This required extensive prompt engineering and iterative refinement.

4. Cost Monitoring Is Non-Negotiable

Multi-agent conversations are token-hungry. Without monitoring from day one, costs would have exceeded projections significantly. We set up real-time tracking with alerts at 80% of budget thresholds.
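A budget tracker with an 80% alert can be sketched as follows. The per-token prices passed in are placeholders, not real provider rates, and the alert here is just a list entry where a real system would page someone or post to a channel.

```python
from dataclasses import dataclass, field

@dataclass
class BudgetTracker:
    budget_usd: float
    alert_fraction: float = 0.8  # alert once 80% of the budget is spent
    spent_usd: float = 0.0
    alerts: list[str] = field(default_factory=list)

    def record(self, input_tokens: int, output_tokens: int,
               usd_per_1k_input: float, usd_per_1k_output: float) -> float:
        """Add the cost of one model call and raise the alert once, at the threshold."""
        self.spent_usd += (input_tokens / 1000) * usd_per_1k_input
        self.spent_usd += (output_tokens / 1000) * usd_per_1k_output
        if self.spent_usd >= self.budget_usd * self.alert_fraction and not self.alerts:
            self.alerts.append(
                f"Spent ${self.spent_usd:.2f} of ${self.budget_usd:.2f} budget"
            )
        return self.spent_usd
```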

5. Response Time Variability Matters

Sometimes the agent responded in under a second. Sometimes it took four seconds. The variability was more disruptive than consistent slowness. We added loading indicators, background processing for non-critical tasks, and timeout limits.
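The timeout limit can be enforced with `asyncio.wait_for` from the standard library. In this sketch the two agent coroutines are stubs that simulate fast and slow responses, and the fallback text is a placeholder.

```python
import asyncio

async def respond_with_timeout(agent_call, timeout_s: float, fallback: str) -> str:
    """Return the agent's reply, or a fallback if it takes longer than timeout_s."""
    try:
        return await asyncio.wait_for(agent_call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        return fallback

async def fast_agent() -> str:
    await asyncio.sleep(0.01)  # simulated quick response
    return "fast reply"

async def slow_agent() -> str:
    await asyncio.sleep(0.5)  # simulated slow response
    return "slow reply"
```

Calling `asyncio.run(respond_with_timeout(slow_agent, 0.1, "One moment..."))` returns the fallback, which gives the UI something predictable to show while the slower work is retried or moved to the background.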

6. Refinement Never Ends

We spent more time adjusting agent behavior than building the initial system. Small changes in system prompts produced noticeably different conversation patterns.

For client-facing production systems, the framework’s level of control matters more than its speed of development. Four months is realistic for a production-grade agent system. Anyone telling you otherwise has not shipped one.

Human in the Loop: The Pattern Nobody Wants to Talk About

Every agent framework demo shows autonomous operation. Reality demands human checkpoints. This was perhaps our most important learning: Human in the Loop (HITL) is not a limitation of agent systems. It is a requirement for trustworthy ones.

Why HITL Matters

- Trust building. Users do not trust fully autonomous agents yet. And honestly, they should not. HITL builds confidence gradually

- Error recovery. When an agent goes off track, a human catches it before it cascades into bigger problems

- Compliance and audit. In regulated industries—finance, healthcare, education—you need to show that a human approved critical decisions

- Quality control. For content generation, human review ensures output meets standards before it reaches end users

Four HITL Patterns We Used

| Pattern | When to Use | Example |
| --- | --- | --- |
| Approval Gates | Before irreversible actions | Human approves before final report generation |
| Review & Edit | For content quality | Trainer reviews AI-generated training scenarios |
| Escalation | When agent confidence is low | Agent routes to human when uncertain |
| Feedback Loop | For continuous improvement | Users rate agent responses; system learns |

Framework Support for HITL

- AutoGen: Built-in human_input_mode with ALWAYS, TERMINATE, or NEVER options

- CrewAI: human_input=True parameter on tasks requiring approval

- LangGraph: Explicit human nodes in the workflow graph

- OpenAI Agents SDK: Handoff primitives that can route to human agents

Our production experience confirmed this pattern’s importance. In the insurance company deployment, we initially launched with minimal human oversight. Users quickly reported inconsistent training scenarios. After adding review checkpoints for scenario generation and periodic quality audits, satisfaction improved dramatically.

The principle is progressive autonomy: start with more human involvement, then gradually reduce it as the system proves itself. Not the other way around.
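Two of these patterns, approval gates and escalation, reduce to a small routing decision. The sketch below is framework-independent and purely illustrative: the confidence floor, the `approve` callback, and the return labels are assumptions. Raising or lowering `confidence_floor` is one simple lever for progressive autonomy.

```python
def route(action: str, confidence: float, irreversible: bool,
          approve, confidence_floor: float = 0.7) -> str:
    """Decide whether an agent action runs, waits for approval, or escalates."""
    # Escalation: low confidence goes to a human regardless of the action type.
    if confidence < confidence_floor:
        return "escalated"
    # Approval gate: irreversible actions need an explicit human yes.
    if irreversible:
        return "executed" if approve(action) else "rejected"
    return "executed"

def never_called(action: str) -> bool:
    raise RuntimeError("approval should not be requested for reversible actions")
```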

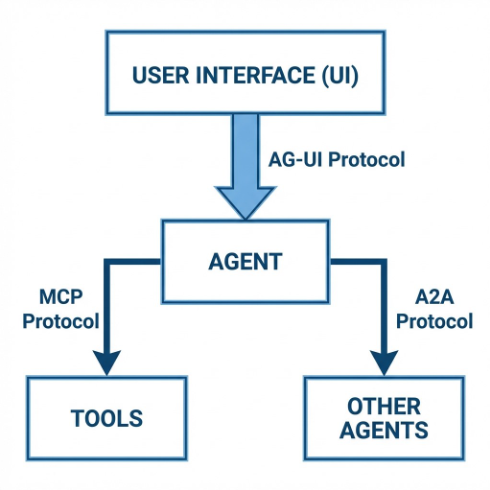

The Emerging Protocol Stack: MCP, A2A, and AG-UI

Beyond frameworks, 2025 saw the emergence of standardized protocols that will shape how agents are built and connected. Understanding these is critical for architectural decisions made today.

MCP: Model Context Protocol

Released by Anthropic in late 2024, MCP is the standard for how agents connect to tools. Think of it as USB for AI tools—a standardized way for any agent to discover and use any tool, without custom integration code.

- Servers expose tools, resources, and prompts

- Clients are AI applications that consume those capabilities

- Protocol uses standardized JSON-RPC communication

- Already supported by Claude Desktop, Cursor, and Zed, with adoption growing rapidly

Why it matters: If you build MCP servers for your internal APIs, any MCP-compatible agent can use them. Instead of writing custom connectors for every framework, you implement once.

A2A: Agent-to-Agent Protocol

Launched by Google in April 2025 and donated to the Linux Foundation in June 2025, A2A addresses a different problem: how agents built by different organizations communicate with each other. Over 150 organizations now support the protocol, including Salesforce, SAP, ServiceNow, and Atlassian.

| Protocol | Purpose | Analogy |
| --- | --- | --- |
| MCP | Agent ↔ Tool communication | How an agent talks to APIs and databases |
| A2A | Agent ↔ Agent communication | How agents from different orgs collaborate |

A2A introduces the concept of Agent Cards—JSON files describing an agent’s capabilities, like a business card for AI. Agents discover each other, negotiate interaction modalities, authenticate, and delegate tasks through a structured protocol built on HTTP, SSE, and JSON-RPC.

A practical example: imagine a university’s assessment agent that needs plagiarism checking. Via A2A, it discovers a specialized plagiarism-detection agent, delegates the task, and receives results—all without custom API integration.
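An Agent Card for the plagiarism-detection agent might look roughly like the structure below. Treat it as an illustration only: the field names follow our reading of the public A2A specification and should be checked against the current version, and the agent, URL, and skill are invented for this example.

```python
# Illustrative Agent Card; all values are hypothetical.
agent_card = {
    "name": "Plagiarism Checker",
    "description": "Checks submitted text for overlap with known sources.",
    "url": "https://agents.example.edu/plagiarism",
    "version": "1.0.0",
    "capabilities": {"streaming": True},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["application/json"],
    "skills": [
        {
            "id": "check_plagiarism",
            "name": "Plagiarism check",
            "description": "Returns a similarity report for a submission.",
            "tags": ["plagiarism", "assessment"],
        }
    ],
}

def skills_matching(card: dict, tag: str) -> list[str]:
    """Return the ids of skills on a card that advertise the given tag."""
    return [skill["id"] for skill in card["skills"] if tag in skill.get("tags", [])]
```

A client agent would fetch cards like this during discovery and use something like `skills_matching(agent_card, "plagiarism")` to decide whether the remote agent is worth delegating to.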

AG-UI: Agent-User Interaction Protocol

Launched by CopilotKit in May 2025, AG-UI addresses the final gap: how agents communicate with user interfaces. While MCP handles agent-to-tool and A2A handles agent-to-agent, AG-UI standardizes agent-to-frontend communication.

- Event-based protocol over HTTP/WebSocket with approximately 17 standard event types

- Bi-directional: UI sends context to the agent, agent streams back to UI

- Built-in support for streaming, state synchronization, tool visualization, and human-in-the-loop approval workflows

- Already integrated with LangGraph, CrewAI, Mastra, and Microsoft Agent Framework

How They Fit Together

These protocols are complementary, not competing. A well-architected agent system uses all three:

- MCP to connect to tools (databases, APIs, search)

- A2A to collaborate with external agents (partner systems, specialized services)

- AG-UI to communicate with users (real-time feedback, approval workflows)

This is where the industry is heading. Teams that adopt these standards early will spend less time on custom integration and more time on their actual product.

The Broader Ecosystem: What Else Should You Know?

OpenAI Agents SDK

Released in March 2025, this is OpenAI’s official agent framework. It takes a minimalist approach with four core primitives: Agents (LLMs with instructions and tools), Handoffs (delegation between agents), Guardrails (input/output validation), and Tracing (automatic observability). Despite the name, it is provider-agnostic and works with 100+ LLMs via the Chat Completions API. If you are already in the OpenAI ecosystem, this is worth evaluating as a simpler alternative to AutoGen.

LangGraph

LangChain’s graph-based workflow engine. It excels at stateful applications with cycles—workflows that need to loop back, save state, and resume. More verbose than CrewAI but more flexible. Good when you need clear visibility into execution flow.

Parlant

An open-source framework by Emcie (Apache 2.0 license), focused specifically on customer-facing conversational agents. Its key innovation is a Guidelines system—behavioral rules dynamically matched to conversation context, which is more reliable than hoping the LLM follows system prompt instructions. Worth monitoring for applications requiring consistent, controlled agent behavior.

Coding Agents: Claude Code and Cursor

These are production-ready, specialized agents that prove an important principle: agents work best when narrowly scoped. Claude Code handles command-line coding tasks—reading codebases, making multi-file edits, running tests. Cursor is an IDE built around AI pair programming. We use both daily. Their success reinforces our recommendation to start narrow and expand gradually.

Low-Code Options

n8n and Zapier have added agent capabilities and are useful for non-developer teams or rapid prototyping. Limited for complex logic, but valuable for stitching together existing tools quickly.

The Cost Question: What Does This Actually Cost?

This is invariably the first question from leadership, and it deserves a direct answer.

Per-Task Cost Ranges

| Approach | Cost per Task | Tokens per Task | When to Use |
| --- | --- | --- | --- |
| Simple Workflow | $0.10–$0.50 | 1,000–3,000 | Linear, deterministic tasks |
| CrewAI Multi-Agent | $0.50–$2.00 | 3,000–10,000 | Structured multi-step tasks |
| AutoGen Multi-Agent | $2.00–$5.00 | 5,000–25,000 | Exploratory, collaborative tasks |
| LlamaIndex RAG | $0.20–$1.00 | 1,000–5,000 | Document processing queries |

Production Reliability: Can We Actually Trust This?

Here is our honest answer, broken down by system type:

| System Type | Production Ready? | What It Needs |
| --- | --- | --- |
| Simple Workflows | Yes | Error handling, input validation, monitoring |
| Tool-Using Agents | Yes, with guardrails | Output validation, cost limits, fallbacks |
| Multi-Agent (structured) | Cautiously yes | Heavy guardrails, HITL checkpoints, progressive rollout |
| Multi-Agent (open-ended) | Not yet | Still too unpredictable for critical paths |

Our Reliability Playbook

- Structured outputs with validation. Never trust raw LLM output for anything that reaches end users. Parse, validate, and constrain

- Conservative temperature settings. Lower temperatures for deterministic tasks. Creativity is the enemy of reliability

- Tool use constraints. Agents cannot make up APIs. Strict whitelisting of available tools prevents hallucinated function calls

- Progressive rollout. Pilot with internal users, then beta with select customers, then general availability. Monitor at each stage

- Iterative refinement. Our insurance company deployment started at 85% accuracy and reached 95% after two months of continuous tuning. Plan for this timeline
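The first item in the playbook, parse, validate, and constrain, can be sketched with the standard library alone. The `Evaluation` shape and the 0 to 10 score range are assumptions chosen to match the mock interview example earlier; a schema library would do the same job with less code.

```python
import json
from dataclasses import dataclass

@dataclass(frozen=True)
class Evaluation:
    score: int
    feedback: str

def parse_evaluation(raw: str) -> Evaluation:
    """Turn raw model output into a validated Evaluation, or raise ValueError."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    score, feedback = data.get("score"), data.get("feedback")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(score, int) or isinstance(score, bool) or not 0 <= score <= 10:
        raise ValueError("score must be an integer from 0 to 10")
    if not isinstance(feedback, str) or not feedback.strip():
        raise ValueError("feedback must be a non-empty string")
    return Evaluation(score=score, feedback=feedback.strip())
```

On a `ValueError`, the caller can retry the model call with the error message appended, or fall back to a safe default, rather than passing malformed output to the user.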

Integration: How Agents Fit Into Existing Architecture

For teams running microservices—as we do with 33 FastAPI services on AWS EKS—agents are not replacements. They are an orchestration layer that sits above your existing services.

- Agents call your existing APIs to perform actual work. They coordinate, they do not replace

- MCP provides a standard way to expose your microservices to agents without custom connectors

- Agents handle the reasoning and sequencing. Your services handle the execution

- This means your existing test suites, monitoring, and deployment pipelines remain intact

The practical implication: you do not need to rewrite anything. You need to add an orchestration layer that intelligently calls what you have already built.

Security and Compliance: What Leadership Needs to Know

Agent systems introduce new security considerations that traditional API architectures do not face:

Prompt Injection

Agents that process user input are vulnerable to prompt injection—malicious inputs designed to override the agent’s instructions. Mitigation includes input sanitization, system prompt hardening, and output validation layers that check for policy violations before delivery.

Data Exposure

Multi-agent systems pass context between agents. Sensitive data in one agent’s context can leak to another. Solution: explicit data classification, context filtering between agents, and audit logging of all data flows.
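Context filtering between agents can be as simple as labeling each field and checking the label before a handoff. The three-level classification and the field names below are hypothetical; the point is that filtering and audit logging happen in one place.

```python
CLEARANCE = {"public": 0, "internal": 1, "restricted": 2}

def filter_context(context: dict, receiver_clearance: str, audit_log: list[str]) -> dict:
    """Pass on only the fields the receiving agent is cleared to see; log the rest."""
    allowed = {}
    for key, (value, label) in context.items():
        if CLEARANCE[label] <= CLEARANCE[receiver_clearance]:
            allowed[key] = value
        else:
            audit_log.append(f"withheld {key!r} ({label}) from {receiver_clearance} agent")
    return allowed

# Each field carries its value and a classification label.
context = {
    "scenario": ("Retirement plan pitch", "public"),
    "trainee_name": ("Asha", "internal"),
    "policy_number": ("PN-0001", "restricted"),
}
log: list[str] = []
coach_view = filter_context(context, "internal", log)
```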

Cost Attacks

Malicious users can craft inputs that cause agents to enter expensive loops—repeatedly calling tools, generating excessive tokens, or creating runaway costs. Solution: per-request cost limits, maximum iteration counts, and real-time budget monitoring.

Compliance Considerations

- Audit trails: Every agent decision, tool call, and output should be logged

- Data residency: For regulated industries, self-hosted LLMs or region-specific endpoints may be required

- Role-based access: Not all team members should be able to modify agent behavior. Prompt management tools with RBAC are essential

- Reproducibility: Agent behavior should be versioned. When something goes wrong, you need to recreate the conditions
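Audit trails and reproducibility fit together naturally if every log record carries a version identifier for the prompt that produced it. The sketch below hashes the system prompt to get that identifier; the record fields and the model name are illustrative assumptions.

```python
import hashlib
import json
import time

def prompt_version(system_prompt: str) -> str:
    """Short, stable identifier for a prompt: same text, same version."""
    return hashlib.sha256(system_prompt.encode("utf-8")).hexdigest()[:12]

def audit_record(event: str, payload: dict, system_prompt: str, model: str) -> str:
    """Serialize one agent decision, tool call, or output as a JSON line."""
    return json.dumps(
        {
            "ts": time.time(),
            "event": event,
            "model": model,
            "prompt_version": prompt_version(system_prompt),
            "payload": payload,
        },
        sort_keys=True,
    )

line = audit_record(
    "tool_call", {"tool": "evaluate_answer"}, "You are a sales coach.", "example-model"
)
```

When behavior changes unexpectedly, filtering the log by `prompt_version` shows which prompt revision was live at the time.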

Seven Lessons from Building Agent Systems

1. Start Simple, Add Complexity Only When Needed

Most use cases do not need multi-agent systems. In our experience, simple workflows with well-crafted prompts handle roughly 80% of real-world requirements. We started with single-agent systems and only added agents when the workflow genuinely demanded it.

2. Cost Is Multiplicative, Not Additive

Multi-agent systems do not cost twice as much as single agents. They cost five to ten times as much, because every agent sees the full conversation history. Set up monitoring from day one. This is not optional.
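The arithmetic behind this is worth seeing once. In the simplified model below, every turn re-reads the system prompt plus the full history so far, then adds its own output. The token counts are made-up round numbers, not measurements from our projects.

```python
def total_tokens(turns: int, tokens_per_turn: int, prompt_tokens: int) -> int:
    """Total tokens processed when every turn re-reads the whole conversation."""
    total = 0
    history = 0
    for _ in range(turns):
        total += prompt_tokens + history  # input: system prompt plus all prior turns
        total += tokens_per_turn          # output produced by this turn
        history += tokens_per_turn
    return total

# Closed form: turns * prompt_tokens + tokens_per_turn * turns * (turns + 1) / 2
single_call = total_tokens(turns=1, tokens_per_turn=200, prompt_tokens=300)
five_turns = total_tokens(turns=5, tokens_per_turn=200, prompt_tokens=300)
```

The history term grows with the square of the number of turns, which is why adding agents or letting them talk longer raises cost much faster than linearly.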

3. Evaluation Is the Hardest Problem

How do you test an agent? The industry is still figuring this out. You need trajectory evaluation (was the reasoning process sound?), not just output evaluation (was the final answer correct?). There is no substitute for realistic testing with real users.
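A basic trajectory check inspects the sequence of tool calls rather than the final answer. The rules below (required order, forbidden tools, a call cap) are one possible starting point, and the tool names are hypothetical.

```python
def evaluate_trajectory(tool_calls: list[str], required_order: list[str],
                        forbidden: tuple[str, ...] = (), max_calls: int = 10) -> list[str]:
    """Return a list of issues found in an agent's sequence of tool calls."""
    issues = []
    if len(tool_calls) > max_calls:
        issues.append(f"too many tool calls: {len(tool_calls)} > {max_calls}")
    for name in forbidden:
        if name in tool_calls:
            issues.append(f"forbidden tool used: {name}")
    # Required steps must appear in order, though other calls may sit between them.
    remaining = iter(tool_calls)
    if not all(step in remaining for step in required_order):
        issues.append("required steps missing or out of order")
    return issues
```

An empty list means the path was acceptable under these rules. It says nothing about answer quality, which still needs output evaluation and human review.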

4. Guardrails Are Essential Infrastructure

Output validation, action constraints, cost limits, human approval checkpoints. These are not nice-to-haves. Our FinRobot project caught problems through human checkpoints that automated testing missed entirely.

5. Memory Architecture Matters More Than You Think

Agents without memory are limited. Agents with naive memory are expensive. Smart summarization—keeping critical context while pruning redundancy—made the difference between a usable system and one that degraded over long conversations.

6. The Refinement Phase Is the Real Project

Building the initial agent takes 20% of the total effort. Getting it production-ready takes the remaining 80%. Small changes in system prompts produce dramatically different behaviors. Plan for weeks of iterative refinement, not days.

7. Narrow Agents Beat General Agents

The most successful agent systems we built and used—Claude Code for coding, Cursor for pair programming, our insurance training simulator for sales practice—all had tightly scoped domains. The narrower the scope, the more reliable the agent.

What to Adopt First: A Prioritized Roadmap

If you are a technical leader evaluating agent adoption, here is what we recommend based on our experience:

Phase 1: Quick Wins (Weeks 1–3)

- Identify two to three use cases where you are doing multi-step LLM work manually

- Pilot CrewAI for structured content generation tasks. You will have a working demo in one week, production-ready in three

- Set up cost monitoring infrastructure before anything else

- Start documenting internal APIs that could become MCP servers

Phase 2: Production Hardening (Weeks 4–8)

- Add LlamaIndex for document processing and RAG-heavy workflows

- Implement HITL patterns for all content that reaches end users

- Build evaluation framework combining automated checks with human review

- Evaluate OpenAI Agents SDK as a potential simplifier for new projects

Phase 3: Advanced Capabilities (Months 3–6)

- Consider multi-agent architectures for genuinely complex use cases like dynamic interview simulation

- Adopt MCP for standardized tool integration across your services

- Evaluate A2A for partner integration scenarios

- Explore AG-UI for standardized frontend-agent communication

What Not to Do

- Do not start with multi-agent systems. Start with simple workflows and graduate

- Do not skip cost monitoring. You will regret it within the first month

- Do not expect production quality from a demo. Budget four to six months for complex agent systems

- Do not build custom integration code when standards exist. MCP, A2A, and AG-UI exist for a reason

What Is Next: The Agent Landscape in 2026

The agent ecosystem is maturing rapidly. What was experimental six months ago is becoming production-grade. Based on what we have seen, here are the trends we expect:

- Protocol convergence. MCP, A2A, and AG-UI will become standard infrastructure. Custom integrations will feel as outdated as hand-written HTTP parsers

- Specialized over general. The most successful agents will be deeply integrated into specific workflows, not general-purpose assistants

- Evaluation tooling. The biggest bottleneck today is testing. Expect significant investment in agent evaluation frameworks, trajectory analysis, and automated quality assurance

- Cost optimization. Smaller, specialized models for specific agent tasks. Not every agent needs GPT-4—a fine-tuned smaller model often performs better for narrow tasks at a fraction of the cost

- Human-agent collaboration. The future is not fully autonomous agents. It is agents that make humans more effective, with clear boundaries and transparent reasoning

Building Agent Systems? Let’s Talk.

At 47Billion, we have built agent systems across education, insurance, and enterprise domains. We have made the mistakes so you do not have to.

If you are evaluating AI agents in production for your organization, explore our AI/ML consulting services or connect directly with our team. We can help with:

- Framework selection based on your specific use case and technical constraints

- POC development to validate agent feasibility before committing to full builds

- Production hardening of existing agent systems—guardrails, monitoring, HITL patterns

- Architecture design for agent integration with existing microservices infrastructure

- Protocol adoption guidance for MCP, A2A, and AG-UI implementation

Get in touch: hello@47billion.com