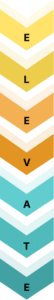

Building Evaluation Infrastructure for Enterprise AI

RAG evaluation is not a pre-launch checklist. It’s continuous infrastructure — and most teams don’t have it.

Enterprises are deploying Retrieval-Augmented Generation (RAG) pipelines to power executive reports, competitive analysis, financial summaries, and client-facing documents. The retrieval works. The generation looks coherent. But without systematic evaluation, there’s no way to know whether the output is faithful to the source, whether the right context was retrieved, or whether a model swap last week silently degraded quality across the board.

We cover the full picture — the right metrics, retrieval benchmarking, evaluation dataset construction, observability tooling — and ground it in a real system we built at 47Billion.

Most teams treat RAG evaluation as a one-time pre-launch checklist: run a few test queries, confirm things “feel okay,” ship the system. Then the corpus grows, prompts evolve, embeddings get updated, and quality silently degrades. Manual QA doesn’t scale, and generic LLM benchmarks don’t capture the real-world complexity of enterprise outputs. In fact, production failures in RAG pipelines often go unnoticed until they impact users, something we’ve broken down in detail in our guide on RAG system failures and fixes in production.

What’s needed is systematic, continuous measurement of two things: retrieval quality (did we pull the right context?) and generation quality (is the output faithful, relevant, and grounded?). That’s what RAG evaluation is.

RAG Evaluation: The Foundation

Before jumping into metrics, it helps to understand what you’re actually evaluating — and when.

How a RAG Pipeline Works (in 60 seconds)

A RAG system has two stages that must both be measured independently:

- Retrieval — A user query is embedded and matched against a vector index of your document corpus. The top-K most semantically similar chunks are returned.

- Generation — Those chunks are injected as context into an LLM prompt. The LLM generates a response grounded (ideally) in that retrieved context.

Failure can happen at either stage, or both. A perfect retriever feeding a hallucinating generator still produces wrong output. A faithful generator working from irrelevant chunks produces off-topic output. Scoring each stage separately is what tells you which one to fix.
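As a minimal sketch of the two stages, the snippet below uses toy bag-of-words vectors in place of a real embedding model and stops at prompt construction instead of calling an LLM. All function names and the sample chunks are illustrative assumptions, not part of any specific framework.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Stage 1: rank chunks by similarity to the query and keep the top K."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

def build_prompt(query: str, context: list[str]) -> str:
    """Stage 2 input: inject the retrieved chunks as context for the LLM."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"

# Hypothetical sample corpus.
chunks = [
    "Q3 revenue grew 12 percent year over year.",
    "The office moved to a new building in May.",
    "Q3 operating costs were flat.",
]
top = retrieve("What was Q3 revenue growth?", chunks, k=2)
prompt = build_prompt("What was Q3 revenue growth?", top)
```

Keeping `top` and the final answer as separate artifacts is what makes it possible to score retrieval and generation on their own.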

The 3 Stages of RAG Evaluation

| Stage | When | What you measure |
| --- | --- | --- |
| Offline | During development | Metrics on a fixed test set: catch issues before they reach users |
| Online / CI/CD | On every code or config change | Regression detection: did this update break quality? |
| Production monitoring | Continuously in live traffic | Real-query drift: are scores degrading over time on actual user inputs? |

Most teams only do offline evaluation. Production monitoring is where the real value is — and where most quality degradation actually gets caught.
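For the CI/CD stage, regression detection can be as simple as comparing the current run against a stored baseline. The sketch below assumes metrics arrive as plain name-to-score dictionaries; the metric names, the scores, and the 0.02 tolerance are illustrative assumptions.

```python
def find_regressions(baseline: dict[str, float],
                     current: dict[str, float],
                     tolerance: float = 0.02) -> dict[str, float]:
    """Return metrics whose score dropped by more than `tolerance` versus the baseline."""
    drops = {}
    for name, base_score in baseline.items():
        # A metric missing from the current run counts as a score of 0.
        drop = base_score - current.get(name, 0.0)
        if drop > tolerance:
            drops[name] = round(drop, 4)
    return drops

# Hypothetical scores from two evaluation runs on the same fixed test set.
baseline = {"faithfulness": 0.91, "context_recall": 0.84}
current = {"faithfulness": 0.90, "context_recall": 0.76}
regressions = find_regressions(baseline, current)
```

In a pipeline, a non-empty `regressions` dictionary would fail the build. The tolerance absorbs some of the run-to-run noise that LLM-judged metrics tend to show.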

When to Trigger an Evaluation Run

Don’t wait for user complaints. Evaluate proactively on these trigger points:

- Corpus update — new documents indexed, old ones removed

- Embedding model swap — even a minor version change can shift retrieval behavior

- Prompt change — any modification to system prompt or instruction templates

- Chunking strategy change — chunk size, overlap, or splitting logic

- LLM upgrade — model version change affects generation faithfulness
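One way to detect these triggers automatically is to fingerprint every setting that can change output quality and re-run evaluation whenever the fingerprint changes. This is a sketch under assumptions: the config keys, model names, and hash values are hypothetical placeholders.

```python
import hashlib
import json

def pipeline_fingerprint(config: dict) -> str:
    """Hash every setting that can change RAG output quality."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def changed_triggers(previous: dict, current: dict) -> list[str]:
    """List the config keys whose values differ between two runs."""
    keys = sorted(set(previous) | set(current))
    return [k for k in keys if previous.get(k) != current.get(k)]

previous = {
    "corpus_manifest_hash": "abc123",       # hypothetical hash of the indexed documents
    "embedding_model": "embed-model-v1",    # hypothetical model name
    "prompt_template_hash": "p-001",        # hypothetical hash of the prompt templates
    "chunking": {"size": 512, "overlap": 64},
    "llm": "llm-v1",                        # hypothetical model name
}
current = {**previous, "chunking": {"size": 256, "overlap": 32}}

needs_eval = pipeline_fingerprint(previous) != pipeline_fingerprint(current)
reasons = changed_triggers(previous, current)
```

Storing the fingerprint next to each evaluation run also makes it easy to tell later which configuration produced which scores.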

Building Your Evaluation Dataset: Synthetic vs Human-Labeled

You need a test set to evaluate against. Two approaches:

- Synthetic (recommended for most teams): Use an LLM to auto-generate question-answer pairs from your document corpus. Tools like RAGAS and Giskard can do this automatically. Fast, scalable, no annotation cost — but synthetic questions may not reflect real user query patterns.

- Human-labeled: Collect real user queries and have domain experts label ground-truth answers. High quality, high cost. Best reserved for high-stakes domains (legal, medical, financial) where synthetic data isn’t trustworthy enough.

In practice: start with synthetic, layer in human-labeled data for your highest-risk query types.

Other Variables Worth Understanding

- Chunking strategy impact: How you split documents directly affects context recall — too-large chunks introduce noise, too-small chunks miss distributed facts. Treat chunking as a pipeline variable and benchmark it like any other change.

- End-to-end vs component-level evaluation: End-to-end measures final output quality; component-level isolates retriever vs generator failures. You need both — end-to-end to catch overall degradation, component-level to diagnose where it’s coming from.

- Reference-based vs reference-free: Reference-based metrics compare against ground-truth answers (accurate, expensive). Reference-free metrics, such as RAGAS faithfulness and answer relevancy, rely on LLM-as-a-Judge and need no labels, which makes them practical at scale.

- Embedding model selection: Swapping embedding models requires a full re-evaluation baseline — never assume quality is preserved across model changes.
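To benchmark chunking as a pipeline variable, it helps to make chunk size and overlap explicit parameters. The sketch below splits on whitespace for simplicity; a real pipeline would more likely split on tokens or sentences, and the function name is an illustrative assumption.

```python
def chunk_words(text: str, size: int, overlap: int) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks

text = "one two three four five six seven eight nine ten"
small = chunk_words(text, size=4, overlap=1)
large = chunk_words(text, size=8, overlap=2)
```

Running the same evaluation set against indexes built with different `size` and `overlap` values turns the chunking decision into a measured comparison.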

The RAG Triad: Core Semantic Evaluation Metrics

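RAGAS is one of the most widely adopted frameworks for RAG evaluation. It uses LLM-as-a-Judge to compute most of its metrics without expensive ground-truth labels, which suits production settings where labeled data is scarce or impossible to maintain at scale. The “RAG Triad,” a term popularized by TruLens, groups these checks into context relevance, groundedness, and answer relevance; RAGAS splits the context side into precision and recall, which is why four metrics appear below. (Alternatives like DeepEval offer similar metrics with stronger CI/CD integration and custom test suites for teams needing pytest-style workflows.)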

Key RAG Metrics (The RAG Triad)

- Faithfulness (Groundedness): The LLM extracts atomic claims from the generated answer, then verifies each against the retrieved context. Score = fraction of supported claims (0 = fully hallucinated, 1 = fully grounded). In practice, many teams flag answers scoring below roughly 0.7–0.8 as potential hallucinations, though your actual cutoff should be calibrated to your domain and risk tolerance. This is the single most critical metric for factual trust.

- Answer Relevancy: The framework generates N synthetic questions from the answer, embeds them, and measures average cosine similarity to the original query. High scores mean the response stays tightly on-topic; low scores flag generic or off-target filler.

- Context Precision: Measures whether the most relevant chunks appear at the top of the retrieved set — penalizing systems that bury key context below irrelevant noise. A common reranking gap in production systems.

- Context Recall: Checks whether all information required to answer the query was retrieved. In RAGAS this is computed against a reference answer, so it needs at least a small labeled set. Low recall typically signals chunking strategy failures or embedding coverage gaps.
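Once an LLM judge has produced per-claim and per-chunk verdicts, the arithmetic behind these four scores is short. The sketch below assumes those verdicts are already available as booleans and that embeddings are plain lists of floats. The function names are illustrative and are not the RAGAS API, and the library's exact formulas may differ in detail.

```python
import math

def faithfulness(claim_supported: list[bool]) -> float:
    """Fraction of atomic answer claims the judge marked as supported by the context."""
    return sum(claim_supported) / len(claim_supported) if claim_supported else 0.0

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def answer_relevancy(query_vec: list[float], question_vecs: list[list[float]]) -> float:
    """Mean cosine similarity between the query and questions generated from the answer."""
    sims = [cosine(query_vec, v) for v in question_vecs]
    return sum(sims) / len(sims) if sims else 0.0

def context_precision(relevant_by_rank: list[bool]) -> float:
    """Rank-aware precision: mean of precision@k over the ranks that hold a relevant chunk."""
    hits, total = 0, 0.0
    for rank, is_relevant in enumerate(relevant_by_rank, start=1):
        if is_relevant:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0

def context_recall(reference_claim_found: list[bool]) -> float:
    """Fraction of reference-answer claims that can be attributed to the retrieved context."""
    if not reference_claim_found:
        return 0.0
    return sum(reference_claim_found) / len(reference_claim_found)

# Same two relevant chunks, ranked well versus buried below an irrelevant one.
well_ranked = context_precision([True, True, False])
buried = context_precision([False, True, True])
```

The `well_ranked` versus `buried` comparison shows why context precision is sensitive to reranking: the retrieved set is identical, only the order changes.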

Understanding these metrics only matters if you’re also optimizing what gets retrieved. The same LLM, the same prompt — different retrieval strategies can produce dramatically different faithfulness scores.

Retrieval Strategy Optimization: Benchmarking What Actually Works

Not every retrieval approach performs equally across document types and query styles. The only way to know what works for your corpus is to benchmark strategies under identical generation conditions.

- Top K Retrieval (3 vs 30 chunks): Top 3 is fast and cheap but risks missing distributed facts. Top 30 boosts recall yet introduces the “lost in the middle” problem: LLMs tend to under-use information placed in the middle of long prompts (Liu et al., 2023).

- Sorted / Two-Stage Reranked Retrieval: The production default. First, retrieve a broad candidate set (Top 30–50) for high recall, then apply a cross-encoder reranker to select the top 5–10 most relevant chunks. In practice this tends to give the best precision-recall balance, but confirm it on your own corpus.
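The two-stage pattern can be sketched with pluggable scoring functions. In the example below, a word-overlap scorer stands in for vector similarity and an exact-phrase scorer stands in for a cross-encoder; both are toy assumptions chosen so the snippet runs without any model.

```python
from typing import Callable

Scorer = Callable[[str, str], float]

def two_stage_retrieve(query: str, chunks: list[str],
                       cheap_score: Scorer, rerank_score: Scorer,
                       candidates: int = 30, final_k: int = 5) -> list[str]:
    """Stage 1 keeps a broad candidate set; stage 2 reorders it with a costlier scorer."""
    broad = sorted(chunks, key=lambda c: cheap_score(query, c), reverse=True)[:candidates]
    return sorted(broad, key=lambda c: rerank_score(query, c), reverse=True)[:final_k]

def word_overlap(query: str, chunk: str) -> float:
    """Toy stand-in for vector similarity: number of shared words."""
    return float(len(set(query.lower().split()) & set(chunk.lower().split())))

def phrase_match(query: str, chunk: str) -> float:
    """Toy stand-in for a cross-encoder: rewards the exact query phrase."""
    return 1.0 if query.lower() in chunk.lower() else 0.0

# Hypothetical sample corpus.
chunks = [
    "growth of revenue was discussed at length",
    "costs and revenue and growth and headcount",
    "the report shows revenue growth of 12 percent",
    "the cafeteria menu changed",
]
result = two_stage_retrieve("revenue growth", chunks, word_overlap, phrase_match,
                            candidates=3, final_k=1)
```

The first three chunks tie on the cheap score, and only the reranker separates the one that actually talks about revenue growth.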

HyDE (Hypothetical Document Embeddings)

For vague prompts like “summarize Q3 competitive positioning,” standard retrieval struggles because the query doesn’t contain enough signal to match against specific document sections.

- How HyDE works: The LLM first generates a rich hypothetical answer/document, which is embedded and used for retrieval instead of the raw query. This bridges the semantic gap at the cost of one extra LLM call. Introduced by Gao et al. (2022) in “Precise Zero-Shot Dense Retrieval without Relevance Labels” — gains vary by domain and query type, so benchmark on your own corpus before committing.
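The HyDE flow described above can be sketched with the LLM, embedding model, and vector search passed in as callables. Everything prefixed `toy_` is a hypothetical stand-in so the example runs offline; a real system would supply its own model and index calls.

```python
from typing import Callable, Sequence

Vector = Sequence[float]

def hyde_retrieve(query: str,
                  generate: Callable[[str], str],
                  embed: Callable[[str], Vector],
                  search: Callable[[Vector, int], list[str]],
                  k: int = 5) -> list[str]:
    """Embed a hypothetical answer instead of the raw query, then search with it."""
    hypothetical_doc = generate(f"Write a short passage that answers: {query}")
    return search(embed(hypothetical_doc), k)

# Toy stand-ins (assumptions) so the sketch runs without an LLM or vector store.
VOCAB = ["competitor", "pricing", "share", "menu"]
DOCS = ["competitor pricing and market share in Q3", "cafeteria menu for October"]

def toy_embed(text: str) -> list[float]:
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]

def toy_generate(prompt: str) -> str:
    return "In Q3 our pricing undercut the main competitor and share rose."

def toy_search(vec: Vector, k: int) -> list[str]:
    def score(doc: str) -> float:
        return sum(a * b for a, b in zip(vec, toy_embed(doc)))
    return sorted(DOCS, key=score, reverse=True)[:k]

query = "summarize Q3 competitive positioning"
raw_query_vec = toy_embed(query)  # carries no signal in this toy vocabulary
hits = hyde_retrieve(query, toy_generate, toy_embed, toy_search, k=1)
```

The raw query shares no vocabulary with the documents, while the hypothetical passage does, which is the semantic gap HyDE is meant to bridge.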

Hybrid Metrics: Traditional NLP + Semantic Baselines for Regression Safety

LLM-as-a-Judge metrics are powerful — but they’re probabilistic. For CI/CD regression safety, you also need deterministic baselines that catch silent quality degradation even when RAGAS scores look stable:

- ROUGE / BLEU → lexical overlap (sanity checks only)

- METEOR → synonym-aware matching

- BERTScore → embedding-based semantic similarity that handles paraphrasing

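These metrics earn their place in CI/CD pipelines because the same inputs always produce the same score, so a drop after a chunking update or embedding model swap reflects a real change in output and not judge noise. The trade-off is that they need reference outputs to compare against.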
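As an illustration of a deterministic baseline, the sketch below implements a ROUGE-1-style unigram F1 in plain Python and wraps it in a pass/fail gate. It is a simplified stand-in, not the reference ROUGE implementation (no stemming or tokenization rules), and the floor value is an assumption to calibrate per project.

```python
from collections import Counter

def unigram_f1(candidate: str, reference: str) -> float:
    """ROUGE-1-style F1: clipped unigram overlap between candidate and reference."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # & keeps the minimum count per word
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)

def lexical_gate(outputs: list[str], references: list[str], floor: float) -> bool:
    """Pass only if the mean unigram F1 stays at or above a fixed floor."""
    scores = [unigram_f1(o, r) for o, r in zip(outputs, references)]
    if not scores:
        raise ValueError("no output/reference pairs to score")
    return sum(scores) / len(scores) >= floor

# Hypothetical output and reference pair.
score = unigram_f1("revenue grew 12 percent", "revenue grew 12 percent in q3")
```

Because nothing here calls a model, re-running the gate on unchanged outputs always returns the same result.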

Enterprise & Multimodal Metrics: Custom Scoring for Real-World Trust

Metrics are only useful if stakeholders can act on them. That means two things: translating scores for non-technical decision-makers, and extending evaluation beyond text to the charts and tables that make or break enterprise documents.

Faithfulness Letter Grades (47Billion’s recommended internal convention — calibrate thresholds to your domain)

| Grade | Score | Action |
| --- | --- | --- |
| A | ≥ 0.9 | Fully trustworthy |
| B | 0.7 to < 0.9 | Human review recommended |
| C | < 0.7 | Significant concerns: do not publish |
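The grade table translates directly into a small helper. This sketch assumes a score of exactly 0.9 counts as an A, following the “≥ 0.9” row; the function and dictionary names are illustrative.

```python
def faithfulness_grade(score: float) -> str:
    """Map a 0-1 faithfulness score to the A/B/C convention in the table above."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= 0.9:
        return "A"
    if score >= 0.7:
        return "B"
    return "C"

# Recommended action per grade, taken from the table.
ACTIONS = {
    "A": "Fully trustworthy",
    "B": "Human review recommended",
    "C": "Significant concerns: do not publish",
}

action = ACTIONS[faithfulness_grade(0.82)]
```

Keeping the thresholds in one function makes them easy to recalibrate per domain without touching the reporting layer.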

Visual Alignment Scores (Multimodal RAG)

Standard text metrics miss the biggest failure mode in enterprise documents: charts, diagrams, and tables that contradict the generated text. Multimodal evaluation uses large multimodal models (LMMs) to judge whether a chart labeled “Q3 Revenue Growth” actually displays Q3 data, or whether bullet hierarchies and image captions align with claims.

Part 2: Putting It Into Practice — Our Slide Generation Experiment at 47Billion

Part 1 covered the theory: what to measure, when to measure it, and which tools to use. But evaluation concepts only reveal their real complexity when you apply them to a specific production problem — with real documents, real queries, and real failure modes you didn’t anticipate. Here’s what that looked like for us.

Adapting RAG Evaluation for Slide Generation: An Internal Experiment

At 47Billion, we ran an internal feasibility experiment on our 7Seers platform to answer a specific question: can we build an AI-powered presentation generator that reliably produces slide content grounded in source documents — without hallucinating details the source never contained?

The core problem we kept running into: a source document has one sparse line on a topic, but a naive RAG pipeline confidently generates an entire slide of invented details around it. We needed to know if per-slide evaluation could catch and prevent this.

We turned the problem into a controlled experiment: give every single slide its own independent confidence score instead of scoring the deck as one monolithic output.

When RAG Actually Makes Sense for Presentations

Most presentation generators don’t need RAG. If the source is small enough to fit in a context window, just pass it directly to the LLM — retrieval only adds latency and failure points.

RAG becomes necessary when the source is too large for direct injection. That’s where we started on 7Seers — teachers were uploading entire textbooks and expecting the system to generate a full presentation deck. The documents were massive. The model was overwhelmed. Retrieval was pulling loosely related chunks from across hundreds of pages, and the output showed it.

The first fix wasn’t a better retrieval strategy — it was restricting the input scope.

Instead of letting users upload an entire book, we constrained the input: upload the relevant chapter or section, not the whole document. Smaller, focused context meant retrieval had a fighting chance. Then we changed generation too — instead of generating the full deck in one pass, the system generates slide by slide, each with its own dedicated retrieval query driven by the slide title and bullet structure.

That’s the key insight: the outline drives retrieval, not a generic query. Slide 4 is “Photosynthesis — Light Reactions.” That title becomes the retrieval query. We know exactly what to look for before we generate a single word.
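A minimal sketch of outline-driven retrieval, assuming each slide is represented by a title plus optional planned bullets. The `SlideOutline` class, the bullet text, and slide 5 are hypothetical illustrations and not the 7Seers data model.

```python
from dataclasses import dataclass, field

@dataclass
class SlideOutline:
    number: int
    title: str
    bullets: list[str] = field(default_factory=list)

def retrieval_query(slide: SlideOutline) -> str:
    """Build one retrieval query per slide from its title and planned bullets."""
    parts = [slide.title] + slide.bullets
    return ". ".join(p.strip() for p in parts if p.strip())

outline = [
    SlideOutline(4, "Photosynthesis - Light Reactions",
                 ["Role of chlorophyll", "ATP and NADPH production"]),  # hypothetical bullets
    SlideOutline(5, "Photosynthesis - Calvin Cycle"),                   # hypothetical slide
]
queries = {slide.number: retrieval_query(slide) for slide in outline}
```

Each entry in `queries` then feeds its own retrieval call, so every slide is generated from, and scored against, its own context.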

HyDE comes in at the edges — when a slide topic is abstract enough that even the structured query doesn’t return strong signal. It generates a hypothetical answer first, then retrieves against that. Edge case, not default.

This was the early version of the feature. The 7Seers platform has matured significantly since — but the evaluation lessons from this experiment shaped how we approach RAG quality across the product.

Where the First Version Failed

Early versions used standard end-to-end RAG:

- One retrieval pass for the entire deck

- Aggregate faithfulness score across all slides

- No visual alignment checks

Result: A single sparse source line triggered hallucinated bullet points, mismatched charts, or invented data. The overall deck score looked acceptable (0.82 average measured faithfulness), but one bad slide at 0.4 destroyed the output’s credibility entirely. Aggregate scores hide disasters, and a deck-level average had no way to surface this one.
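The gap between the deck-level and slide-level view is easy to reproduce. The per-slide scores below are invented for illustration, chosen only to match the shape of the failure described above (a 0.82 average with one slide at 0.4); the 0.7 threshold is the review cutoff used elsewhere in this article.

```python
from statistics import mean

def deck_report(slide_scores: dict[int, float], threshold: float = 0.7) -> dict:
    """Report the deck average alongside the worst slide and every slide under the threshold."""
    worst = min(slide_scores, key=slide_scores.get)
    return {
        "mean": round(mean(slide_scores.values()), 2),
        "worst_slide": worst,
        "worst_score": slide_scores[worst],
        "flagged": sorted(n for n, s in slide_scores.items() if s < threshold),
    }

# Illustrative scores only, not data from the experiment.
scores = {1: 0.95, 2: 0.90, 3: 0.85, 4: 0.90, 5: 0.40, 6: 0.92}
report = deck_report(scores)
```

The mean alone would pass most quality gates; the `flagged` list is what routes slide 5 to human review.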

What We Fixed

- Page-level indexing (instead of large document chunks) → dramatically better context recall for specific slide topics.

- Per-slide RAG Triad scoring:

  - Faithfulness: Extract atomic claims from slide text and chart captions; verify each against retrieved chunks. Score < 0.7 = auto-flag for human review.

  - Answer Relevancy: Check if the slide stays tightly on its assigned topic.

  - Context Precision/Recall: Ensure only the most relevant pages are passed.

- Multimodal Visual Alignment (custom metric): Use a vision-language model to verify that any chart labeled “Q3 Revenue” actually shows Q3 data, not Q2 or fabricated numbers.

Key Measured Learnings

- Aggregate scores hide disasters — one slide at 0.4 faithfulness can ruin the entire deck.

- HyDE boosted recall by 30–40% on ambiguous executive queries (e.g., “summarize market positioning”) — measured across our internal test suite.

- Side-by-side testing of Top 3 vs Top 30 vs HyDE variants showed the winner changes per slide type — dashboards made this obvious.

Dashboard & Observability Layer: From Metrics to Actionable Insights

The experiment above only worked because we could see what was failing. Without observability infrastructure, metrics stay invisible. Production RAG systems need:

- Langfuse: Open-source observability platform with both self-hosted and managed cloud options. Provides traces, evaluation scores, and integrates with any LLM framework.

- Arize Phoenix (open-source): Embedding drift detection, RAGAS integration, visual UMAP projections.

- DeepEval: Custom G-Eval rubrics for domain-specific quality criteria. Strong pytest-style CI/CD integration.

- TruLens: RAG Triad feedback functions with minimal setup.

Conclusion: From Experimentation to Enterprise-Grade Confidence

How do you evaluate RAG systems for trustworthy AI? Start with the RAG Triad + RAGAS, layer observability, benchmark retrieval strategies, and adapt metrics to your output format.

47Billion builds custom RAG evaluation frameworks. Our ELEVATE framework and end-to-end AI/ML expertise, from production RAG architecture to agentic governance, make measurable trust achievable at enterprise scale.

Can you trust what your AI built? With this RAG evaluation approach — from flying blind to measurable trust — the answer is finally yes.

Ready to implement? Book a discovery call with 47Billion and move your RAG systems from experimentation to enterprise-grade confidence.