Huge cranes are lifting heaps of dirt in a remote mine in a desert of Australia. There are excavators, scrapers, drills, loaders, lifts and earthmovers working in unison to dig iron, lithium, gold and diamonds. Each machine with thousands of moving parts working 24×7 in a harsh environment requires close monitoring and maintenance to keep it running. This requires dedicated maintenance personnel and a stock of spare parts in all locations. Any breakdown that results in significant downtime means a huge loss.

An indoor organic hydroponic farm is humming with lights, fans, nutrient mixers and water pumps in a five-star hotel in the city of Orlando, FL. Strict climate control with optimal conditions is required to keep plants growing well. Any failure of components, even for a short duration of time can affect the quality of the green produce.

An electric car is self-driving on the roads of California. The IoT sensors in the engine are continuously feeding data to a central server which is monitoring the engine performance, optimizing the run and predicting any potential failures.

The advent of 5G connectivity for fast connections, IoT sensors to monitor and fast analytics infrastructure to churn data has enabled the upcoming area of decision science. The heart of decision science is predictive analytics.

What is Predictive analytics?

Predictive analytics uses many techniques from data mining, statistics, modeling, machine learning, and artificial intelligence to analyze real-time data to make predictions about the future. Predictive analytics allows organizations to become proactive, forward-looking, and help in making future decisions based upon the data instead of a hunch.

In this blog, we talk about our implementation of predicting failures of components in an engine based on the continuous collection of sensor data.

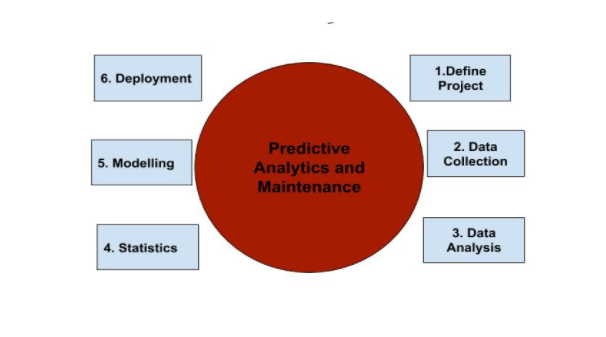

Predictive Analytics Process

Training Data

We used data from system/device logs collected from the automobile engine. The sensors built into the engine send various parameter values like device temperature, pressure, strength. Each one of these parameters has multiple states with their threshold values. When the engine runs, the parameter values may cross these threshold limits raising alerts.

Approach

The way to predict these alerts is to first predict the future values of these parameters based on the values already received. We broke down our approach into two steps-

- Predict parameter values and generate an alert, if the predicted values go beyond the pre-defined limit.

- Build a model to predict error events with error messages using predicted values from step 1, so that the user can know beforehand that an error message will come at a certain time with certain probability.

Data Preprocessing

Since the data generated from device logs was unstructured and untidy, various pre-processing techniques were used to add structure and meaning:

- data type conversion

- removing outliers

- identifying patterns to examine errors at runtime

- finding specific commands which yield information about the state and parameter values.

- missing value treatment

Model Building & Prediction

- We predict the values of parameters for the next one hour and their states based on the already collected data and use of LSTM (Long-short term memory) model. For example, if a parameter has two states, we built models for both the states and continuously predicted the values for the next hour.

With the help of predicted parameter values and mapping predicted values with device manuals, we had the information about when a value may go beyond its threshold. Using this information we designed the system to send alerts 5 minutes before the expected alert time. - We built a classification model for events as Error or No Error based on the predicted parameter values obtained from step one. To build the classification model we split the data into training and test sets in the ratio of 70:30. To overcome the class imbalance problem we applied an upsampling method on training data and again split that 70% data into training and test sets. The purpose of this splitting is to validate our model on both balanced and imbalanced data.

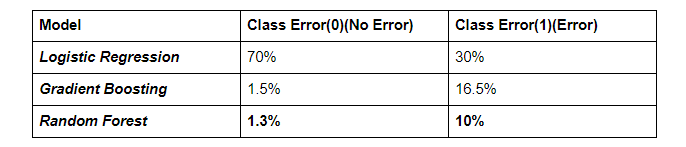

We tested different machine learning algorithms on both balanced and imbalanced data. Classification models used were Logistic Regression, Gradient Boosting and Random forest. Among all these algorithms performance of the Random forest model on both balanced and imbalanced test data was much better than the other two as shown below.

As we can see from above that the class error percentage given by the Random forest model is minimum as compared to other algorithms.

Model Deployment and Serving

We developed a test dashboard called Predictive Maintenance Tool with the following functionality:

- The user can upload the device logs manually or trigger the tool to know about when an error is going to happen based on the runtime events.

- Display the graph of predicted values for different parameter values.

- Place to manually enter the error which happened unexpectedly and not in the device manual but resolved by service/maintenance engineer, so that they can add it for future reference.

Challenges

- Data imbalance caused the problem of less information to classify events as Error and No Error.

- Untidy data.

- Multiple errors occurring at the same time.

- Mapping of predicted parameter values with manuals.

- Due to fluctuation in devices at run time there is a gap in the generated value of logs.

Conclusion

The predictive analytics machine learning model worked well to provide alerts before the engine values went beyond thresholds avoiding expensive repair costs to the automobile owners. As machine learning models get embedded into edge devices, a combination of such locally generated predictive alerts with server-side machine learning to provide feedback loop will provide efficiency and disaster recovery in all verticals like automobiles, manufacturing, agriculture, and healthcare.